Source: Walrus

AI agents are evolving toward autonomously handling increasingly complex workflows, yet the infrastructure governing what these agents actually remember between sessions remains unsettled. MemWal, a project emerging from the Walrus ecosystem, sets out to solve this problem on top of decentralized storage. It is currently available in beta.

Agent memory has recently surfaced as a critical infrastructure concern. For an agent to move beyond one-off prompt responses and become a persistently operating system, it needs to retain conversation history, learned skills, reasoning traces, and workflow outputs across sessions.

Most developers address this today by cobbling together existing infrastructure: session state goes into Redis, files into S3, embeddings into a vector database. Each piece works in isolation, but none was designed to treat agent memory as a system primitive. The result is memory that is scattered across multiple systems, difficult to verify, and hard to keep consistent over time.

Dedicated solutions that put this problem front and center already exist. Mem0 has raised $24M, was selected as the official memory provider for the AWS Agent SDK, and offers a dual-storage architecture combining vector embeddings with a graph database. Letta (formerly MemGPT) takes an OS-metaphor approach where the LLM itself manages memory. Other competitors include LangMem, Zep, MemMachine, and more.

But these solutions share a common premise: the memory data ultimately lives on centralized infrastructure (AWS, GCP, self-hosted servers). In scenarios where agents make autonomous decisions and move real assets, having to trust a third party for the provenance and integrity of that memory can become a structural limitation.

MemWal starts from this observation and designs a structure where agent memory is stored on Walrus's decentralized storage while ownership and access control are managed on-chain through Sui.

Developers attach memory to their agents via the SDK, and a backend relayer handles the actual interactions with Walrus and Sui. The relayer can be an existing hosted instance or self-hosted.

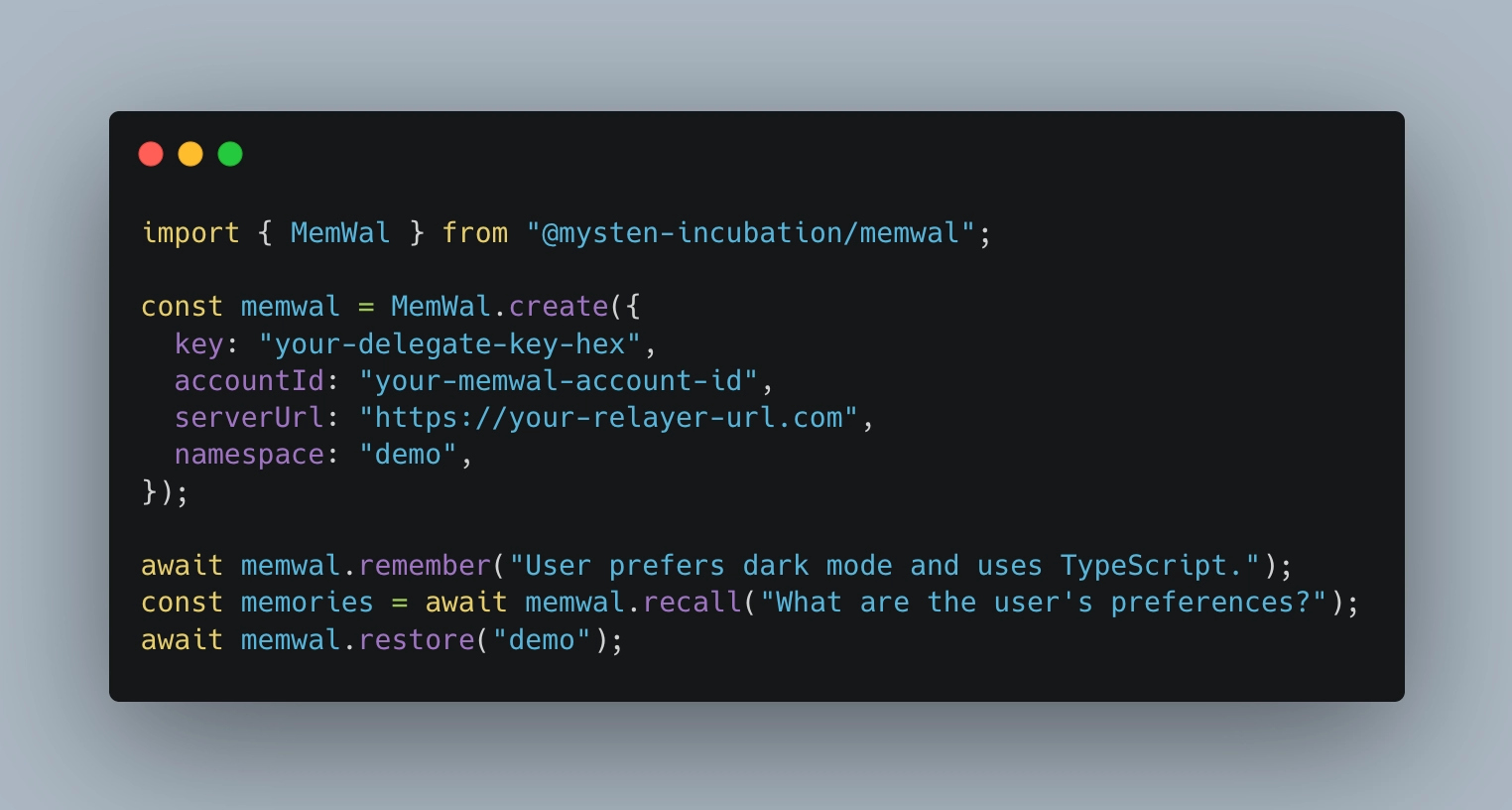

The SDK's core API is designed to be simple to integrate.

Behind the straightforward interface of remember, recall, and restore, the relayer handles a considerable amount of work. According to the MemWal repository, the relayer is responsible for embedding generation, encryption via Seal, upload and download to Walrus, PostgreSQL storage for vector metadata, semantic search, and namespace restoration.
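To make the relayer's division of labor concrete, here is a minimal in-memory sketch of a remember/recall/restore flow. It is not the MemWal SDK or relayer: `ToyRelayer`, `embed`, and `toy_cipher` are hypothetical names, the word-count "embedding" stands in for a real embedding model, the XOR keystream stands in for Seal (and is not secure), a dict stands in for Walrus, and a list stands in for the PostgreSQL metadata table. The `restore` behavior shown (rebuilding the search index from stored blobs) is an assumption about what namespace restoration means.

```python
import hashlib
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: word counts. A real relayer would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def toy_cipher(data, key):
    """Placeholder for Seal: XOR with a SHA-256 keystream. NOT secure."""
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(x ^ y for x, y in zip(data, stream))


class ToyRelayer:
    """Hypothetical stand-in for the relayer; all storage is in memory."""

    def __init__(self, key):
        self.key = key
        self.blobs = {}   # stand-in for Walrus: blob_id -> encrypted bytes
        self.index = []   # stand-in for the vector metadata table

    def _index_row(self, namespace, blob_id, text):
        return {"namespace": namespace, "blob_id": blob_id, "vector": embed(text)}

    def remember(self, namespace, text):
        """Encrypt, store the blob, and index its embedding."""
        ciphertext = toy_cipher(text.encode(), self.key)
        blob_id = hashlib.sha256(ciphertext).hexdigest()
        self.blobs[blob_id] = ciphertext
        self.index.append(self._index_row(namespace, blob_id, text))
        return blob_id

    def recall(self, namespace, query, limit=3):
        """Semantic search within one namespace, then fetch and decrypt."""
        q = embed(query)
        rows = [r for r in self.index if r["namespace"] == namespace]
        rows.sort(key=lambda r: cosine(q, r["vector"]), reverse=True)
        return [toy_cipher(self.blobs[r["blob_id"]], self.key).decode()
                for r in rows[:limit]]

    def restore(self, namespace, blob_ids):
        """Rebuild a namespace's search index from stored blobs alone."""
        self.index = [r for r in self.index if r["namespace"] != namespace]
        for blob_id in blob_ids:
            text = toy_cipher(self.blobs[blob_id], self.key).decode()
            self.index.append(self._index_row(namespace, blob_id, text))
        return len(blob_ids)
```

The point of the sketch is the split in responsibilities: the caller only sees three verbs, while encryption, blob storage, indexing, and search all happen behind them.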

The blog post names four core features. Cross-referencing each of them with the code gives the following picture:

Structured Memory Spaces: Agent memory is organized not as unstructured logs but as purpose-built structured containers. This resembles how existing memory solutions distinguish between semantic (facts/preferences), episodic (past experiences), and procedural (behavioral patterns) memory, but differs in that the storage layer itself natively supports these distinctions. A namespace parameter separates memory into purpose-specific containers, with each use case operating in its own independent memory space.

Flexible Ownership Models: Who owns, controls, and holds memory can be defined at the user, agent, or application level. All operations are scoped by owner + namespace, and ownership is managed on top of Sui's object model.

Programmable Access Control: Fine-grained permissions can be set for reading, writing, and sharing memory, with threshold encryption via Seal built in by default. In manual mode, developers can control Seal encryption directly and program access conditions through Move contracts.

Typed Memory Systems: Different memory types such as conversations, checkpoints, and reasoning traces are natively distinguished.
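The four features above can be pictured together as a store keyed by owner + namespace, with typed entries and per-space read/write grants. The sketch below is a conceptual model only: `MemoryStore`, `MemorySpace`, and `MemoryType` are hypothetical names, and the permission checks happen in plain Python here, whereas the article describes ownership and access conditions as living in Sui objects and Move contracts.

```python
from dataclasses import dataclass, field
from enum import Enum


class MemoryType(Enum):
    CONVERSATION = "conversation"
    CHECKPOINT = "checkpoint"
    REASONING_TRACE = "reasoning_trace"


@dataclass
class MemorySpace:
    """One container, identified by (owner, namespace)."""
    owner: str
    namespace: str
    readers: set = field(default_factory=set)
    writers: set = field(default_factory=set)
    entries: list = field(default_factory=list)

    def can_read(self, who):
        return who == self.owner or who in self.readers

    def can_write(self, who):
        return who == self.owner or who in self.writers


class MemoryStore:
    """Hypothetical model: every operation is scoped by owner + namespace."""

    def __init__(self):
        self.spaces = {}

    def space(self, owner, namespace):
        key = (owner, namespace)
        if key not in self.spaces:
            self.spaces[key] = MemorySpace(owner, namespace)
        return self.spaces[key]

    def write(self, caller, owner, namespace, kind, content):
        space = self.space(owner, namespace)
        if not space.can_write(caller):
            raise PermissionError(f"{caller} may not write {owner}/{namespace}")
        space.entries.append((kind, content))

    def read(self, caller, owner, namespace, kind=None):
        space = self.space(owner, namespace)
        if not space.can_read(caller):
            raise PermissionError(f"{caller} may not read {owner}/{namespace}")
        # Filter by memory type when one is requested.
        return [c for k, c in space.entries if kind is None or k is kind]
```

For example, an owner could write a checkpoint into a `"trading"` namespace, add another agent to `readers`, and that agent could then read checkpoints from that space but not from the owner's other namespaces.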

MemWal does not compete at the same layer as Mem0 or Letta. Mem0, for example, uses LLMs to extract key facts from conversations, models entity relationships through a graph-based approach, and delivers sub-50ms search latency.

What MemWal actually provides is closer to "memory infrastructure" than "memory intelligence." Specifically, three aspects set it apart from existing solutions.

Verifiability: All data stored on Walrus has availability proofs recorded on the Sui chain. It becomes possible to verify after the fact which memory an agent referenced when making a decision, and whether that memory was tampered with. With centralized Redis or S3, you have to trust the operator; with Walrus, guarantees come at the protocol level.

On-chain Ownership Management: Memory access rights are managed not through API keys or server configurations but through Sui's object ownership model. This means scenarios like cross-agent memory sharing, memory transfers, or conditional access to memory can be programmed via smart contracts.

Decentralized Storage: Memory data does not depend on a single server or cloud provider. Walrus distributes data across approximately 2,200 storage nodes using erasure coding, so data availability is maintained even if some nodes go down.
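Two of the ideas above, tamper detection and surviving node loss, can be illustrated with a small toy. This is a sketch under simplifying assumptions, not Walrus's actual scheme: a plain SHA-256 digest stands in for whatever commitment is recorded on-chain, and a single XOR parity shard stands in for erasure coding (it can repair only one lost shard, far weaker than a production encoding). The function names are hypothetical.

```python
import hashlib
from functools import reduce


def digest(blob):
    """Stand-in for an on-chain commitment to the blob's contents."""
    return hashlib.sha256(blob).hexdigest()


def xor_bytes(a, b):
    return bytes(x ^ y for x, y in zip(a, b))


def encode(blob, k):
    """Split into k equal shards (zero-padded) plus one XOR parity shard."""
    size = -(-len(blob) // k)  # ceiling division
    padded = blob.ljust(size * k, b"\0")
    shards = [padded[i * size:(i + 1) * size] for i in range(k)]
    parity = reduce(xor_bytes, shards)
    return shards + [parity]


def decode(shards, length):
    """Rebuild the blob when at most one shard (data or parity) is None."""
    missing = [i for i, s in enumerate(shards) if s is None]
    if len(missing) > 1:
        raise ValueError("single parity can repair only one lost shard")
    shards = list(shards)
    if missing:
        present = [s for s in shards if s is not None]
        # XOR of all surviving shards equals the missing one.
        shards[missing[0]] = reduce(xor_bytes, present)
    return b"".join(shards[:-1])[:length]


if __name__ == "__main__":
    memory = b"agent memory: approved transfer of 10 SUI"
    recorded = digest(memory)      # imagine this value recorded on-chain
    pieces = encode(memory, 4)     # imagine each piece on a different node
    pieces[2] = None               # one node goes offline
    restored = decode(pieces, len(memory))
    print(digest(restored) == recorded)
```

Anyone holding the recorded digest can check a retrieved blob without trusting whoever served it, which is the property the Verifiability paragraph relies on.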

The scenarios where MemWal's differentiators genuinely shine are those that simultaneously demand trustworthiness and shareability in agent memory.

Multi-agent Systems: In architectures where multiple agents collaborate through shared memory, which agent wrote and read which memory can be tracked on-chain. Solutions like Mem0 support multi-tenancy as well, but programmably defining memory ownership across agents is a different problem.

Auditable Decision-Making: When an agent executes financial transactions or makes sensitive decisions, it should be possible to prove after the fact what memory the agent relied on at what point in time. When memory is stored on Walrus and proofs are recorded on Sui, this kind of audit trail becomes feasible.

Privacy-Sensitive Memory: In medical, legal, and financial domains where agents need to retain sensitive information, Seal encryption allows memory to be stored in encrypted form, with decryption restricted to parties that meet specific conditions. The fact that even the server operator cannot view the contents provides a fundamentally different trust model from centralized memory solutions.

Agent Memory Portability: There may be scenarios where an agent is migrated to a different platform, or memory is transferred to another agent. When memory is not locked into a particular vendor's infrastructure but sits on a decentralized protocol, such portability becomes possible at the protocol level.
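The "threshold" in the Privacy-Sensitive Memory scenario means that no single party holds enough to decrypt alone. Seal's actual construction is not shown here; as a swapped-in illustration of the t-of-n idea, the sketch below uses Shamir secret sharing over a prime field to split a key so that any `threshold` shares recover it. The function names and the choice of prime are assumptions made for this example.

```python
import secrets

# 2**127 - 1 is a Mersenne prime; secrets must be smaller than this value.
PRIME = 2**127 - 1


def split(secret, threshold, shares):
    """Split `secret` into `shares` points; any `threshold` of them recover it."""
    # Random polynomial of degree threshold-1 with the secret as constant term.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]

    def evaluate(x):
        acc = 0
        for c in reversed(coeffs):  # Horner's rule
            acc = (acc * x + c) % PRIME
        return acc

    return [(x, evaluate(x)) for x in range(1, shares + 1)]


def combine(points):
    """Lagrange interpolation at x = 0 recovers the constant term."""
    secret = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (-xj) % PRIME
                den = den * (xi - xj) % PRIME
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret


if __name__ == "__main__":
    key = secrets.randbelow(PRIME)
    parts = split(key, threshold=3, shares=5)
    print(combine(parts[:3]) == key)
```

With a 3-of-5 split, any three share holders can jointly recover the key, while one or two holders acting alone learn nothing about it.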

Conversely, for typical chatbot or assistant scenarios where a single agent needs fast semantic search and intelligent memory management, Mem0 or Letta is likely the more practical choice at this point.

MemWal is part of a broader effort to position Walrus beyond simple file storage and toward becoming the memory layer for AI agents. One precedent is elizaOS V2, which already integrates Walrus as its default memory layer; MemWal takes this direction further by materializing it into a more general-purpose SDK.

Of course, the information publicly available so far is not sufficient to fully assess MemWal's technical depth. Details like the SDK's API design, memory query mechanisms, relayer implementation specifics, and the practical constraints imposed by Walrus storage costs will require firsthand examination of the documentation and code.

The agent memory market is growing rapidly, and the core competition is playing out in the domain of how intelligently a system can remember. MemWal introduces a different axis: how reliably a system can remember. Whether this axis gains real traction in the market ultimately depends on two things. The first is how quickly the number of cases grows where agents handle real assets and high-stakes decisions. The second is how well MemWal can build intelligent memory management capabilities on top of verifiable storage.