Ethereum's P2P network performance is determined not by peer count, but by the quality of mesh composition, and nodes in regions with low node density face structural disadvantages. GossipSub forms a mesh of just 6 to 12 peers per topic, and peer scoring causes high-latency nodes to fall into a vicious cycle of mesh exclusion. In node-dense regions like Europe and North America, nearby peers boost each other's scores in a virtuous cycle, while nodes in Asia, South America, and Africa can be pushed out of the mesh and remain periphery peers even with the same number of connections.

Upcoming Ethereum roadmap changes including FOCIL, PeerDAS, and slot time reduction will further strengthen the need for geographic diversity. FOCIL's censorship resistance relies on the geographic diversity of its 17-member committee, the evolution from PeerDAS to Full DAS depends even more heavily on the geographic distribution of data columns, and shorter slot times amplify the impact of regional latency gaps.

Running validators in non-Western regions requires more effort, but operators can contribute to the global balance of GossipSub meshes through forming regional node clusters, leveraging DVT, and engaging in governance. At the same time, major staking pools like Lido should prioritize geographic diversity in operator onboarding, and the Ethereum protocol itself must evolve to lower barriers to entry for non-Western regions. Meaningful geographic diversity requires the combined efforts of individual node operators, major staking pools and institutional stakers, and protocol designers.

While running over 25,000 Ethereum validators (800K+ ETH) in Asia, the task I spent the most time and effort on was pushing attestation accuracy to barely above average. Increasing peer count, upgrading node hardware, setting up static peering, and choosing clients less sensitive to latency were not fundamental solutions. In Ethereum's consensus-layer P2P, what matters more than raw peer count is improving the score your peers assign to your node, so that it gets included in the mesh for key topics. Even so, validators in non-Western regions have to spend more effort and money. The easiest solution would have been simply "moving the nodes to a European or US region," but I deliberately avoided that choice. As participants in the Ethereum network, we should do what we can to help the network achieve its stated goals. Ethereum is a peer-to-peer network before it is a block + chain. The root cause of most network constraints and node operation issues lies in how peers communicate with each other. Let's explore how that communication works, why the geographic distribution of peers matters, and what is going to change going forward.

The title of the Bitcoin whitepaper is "Bitcoin: A Peer-to-Peer Electronic Cash System." (In fact, the word "blockchain" does not appear anywhere in the Bitcoin whitepaper.) The origin and essence of blockchain is Peer-to-Peer (P2P). In other words, the distribution of peers (nodes) is the core of blockchain's distributed system.

Yet when we discuss blockchain performance, topics like parallel execution, consensus algorithm improvements, and TPS are actively covered, while discussions about the P2P network itself are relatively scarce. Even node operators participating as Ethereum validators often do not fully understand how Ethereum's P2P network works. But this invisible layer is the physical foundation that underpins blockchain's decentralization and censorship resistance, and it deserves more attention.

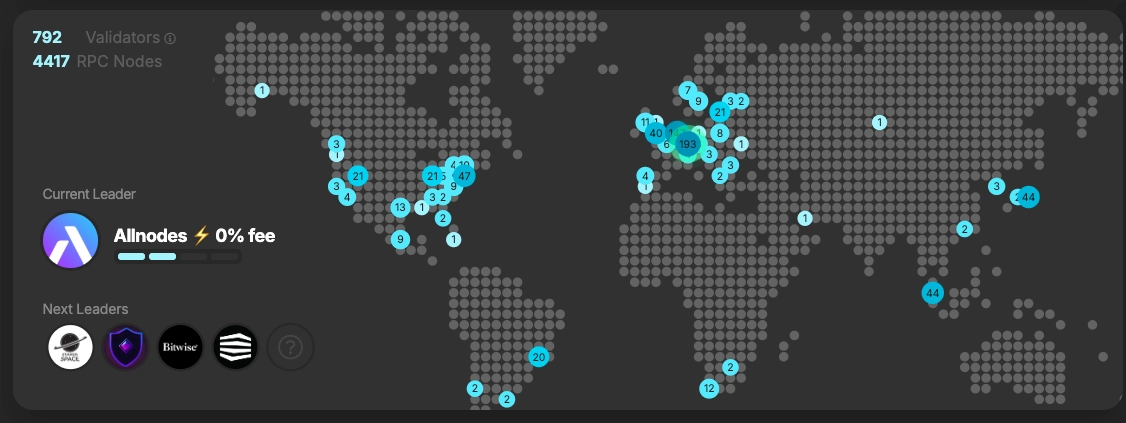

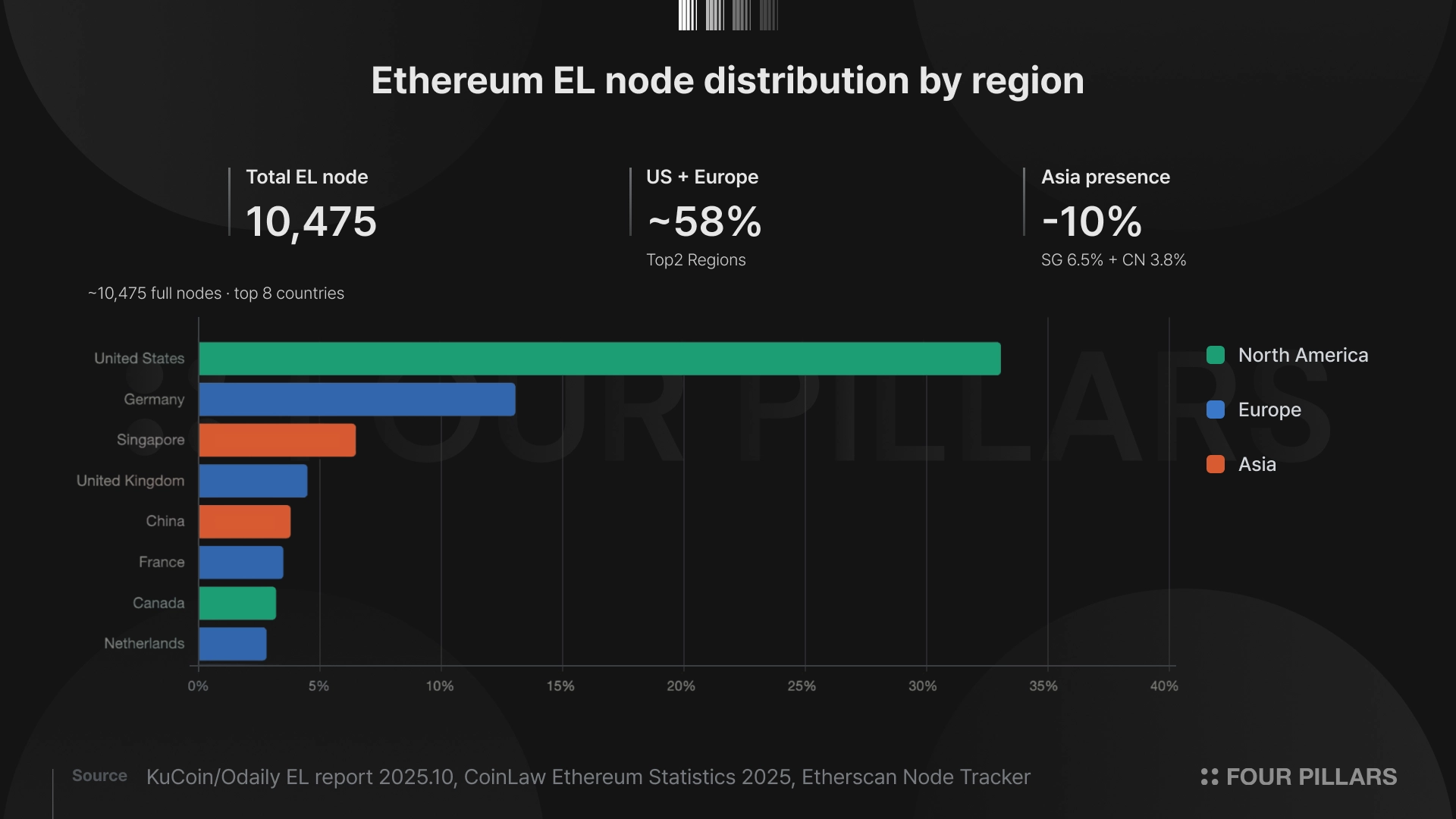

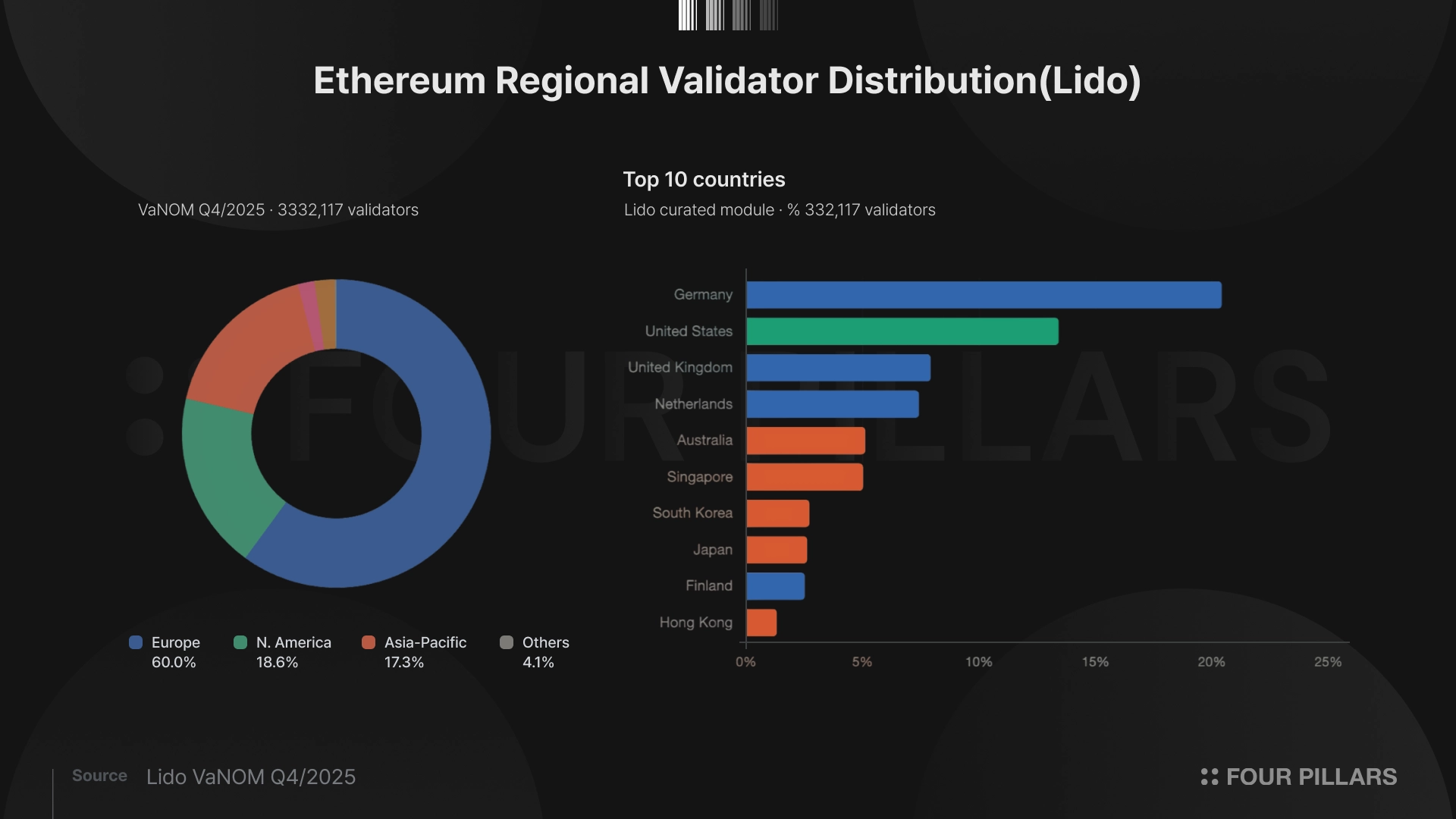

Ethereum is a massive distributed computer powered by over 11,000 full nodes and more than 1 million validators worldwide. However, behind these numbers lies a geographic imbalance. On the execution layer (EL), the top 3 countries alone account for roughly half of all nodes. According to Lido operator data, Europe and North America together host roughly 80% of validators. Asia, home to 60% of the world's population, and South America and Africa, where crypto adoption is growing fastest, remain effectively on the periphery of the Ethereum network.

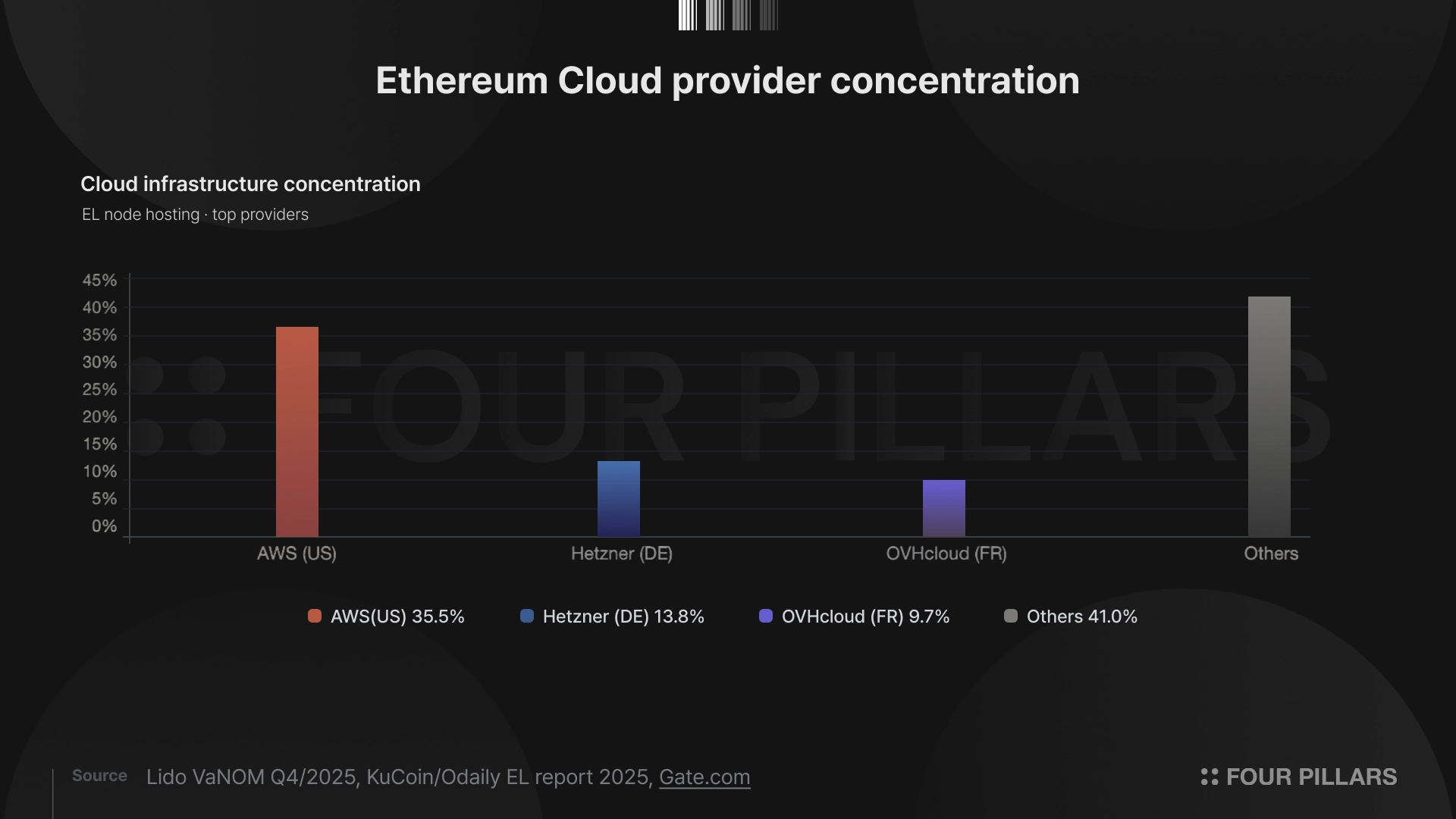

When discussing blockchain's technical decentralization, we tend to focus on "number of validators" or "client diversity." These are certainly important. But equally important is geographic distribution. If nodes are concentrated in a specific region, jurisdiction, or cloud provider under a particular government's influence, Ethereum's P2P network loses the diversity its design presupposes, and the core values of censorship resistance and network resilience are threatened.

This article takes a deep look at how Ethereum's P2P network operates. We expect it to serve as a useful reference for those looking to improve node performance when running Ethereum validators or full nodes. We then analyze why the structure of Ethereum's P2P network was designed with geographic diversity as a prerequisite, and explain what problems the current peer composition poses for achieving the "world computer" vision. Furthermore, we examine how this landscape may change from the perspective of the Strawmap roadmap through 2029, and how major staking pools and node operators can contribute to improvement.

Ethereum's networking layer is not a single protocol. Two P2P stacks operate in parallel, each serving the execution layer (EL) and consensus layer (CL) respectively. Let's examine what characteristics matter for each layer from a P2P perspective.

If you are already familiar with Ethereum's P2P network or prefer to skip the deep technical content, you can jump directly to "3. How Geographic Distribution of Peers Affects Network Performance." However, we recommend reading "2.2 libp2p, the Consensus Layer's Messaging Infrastructure" at least once for a clearer understanding of the issues discussed later.

The execution layer (EL) handles transaction processing and state management. It collects and propagates transactions from the mempool, executes smart contracts, and maintains Ethereum's global state.

From a P2P perspective, what matters for the EL is fast transaction propagation and efficient synchronization of large state data. New transactions must spread quickly across the network so that they have a fair chance of block inclusion, and new nodes must be able to efficiently download Ethereum's state, which runs to hundreds of gigabytes, to keep the barrier to network participation low.

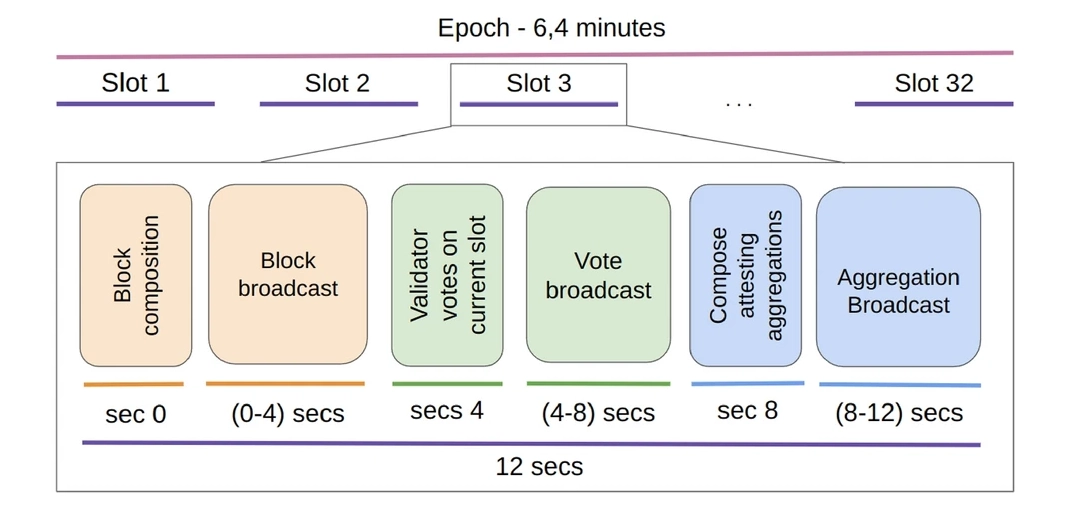

The consensus layer (CL) manages the consensus process that determines which blocks are included in the canonical chain. Validators propose blocks, send attestations, and aggregate them to determine the chain's head. From a P2P perspective, what matters for the CL is extremely tight message propagation within the 12-second slot. (Future roadmap plans could reduce the slot time to as little as 2 seconds.)

Source: The impact of connectivity and software in Ethereum validator performance

Block proposal, attestation voting, and aggregation must all complete within a single slot, so a message arriving just 0.5 seconds late can change whether an attestation is accurate. Inaccurate or late attestations earn reduced rewards or incur penalties, which means a validator node's geographic location directly affects its staking APY. Moreover, with over 1 million validators participating simultaneously, topic-based routing is essential for processing messages efficiently across the network.

These two layers are connected via the Engine API on a single physical node, but their P2P communication occurs on completely independent networks. The EL uses the custom-designed devp2p stack, while the CL uses libp2p, a general-purpose framework developed by Protocol Labs. Let's examine each in detail.

devp2p is the P2P protocol stack designed in Ethereum's earliest days, and it is still the networking foundation for all execution clients, including Geth, Nethermind, and Besu. It has three core components.

2.1.1 Node Discovery

Node discovery uses discv4/discv5, protocols based on a modified Kademlia DHT, to find other nodes on the network. A new node first connects to hardcoded bootnodes to obtain an initial peer list, then autonomously discovers further peers through the DHT.
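To make the Kademlia distance metric concrete, here is a small Python sketch. It is illustrative only: real node IDs are keccak256 hashes of the node's public key, whereas the hypothetical helper `toy_node_id` uses SHA-256 over a label because Python's standard library has no keccak256. `log_distance` follows discv5's notion of log distance as the bit length of the XOR of two IDs, and `closest` is a hypothetical stand-in for one step of a lookup.

```python
import hashlib


def toy_node_id(label: str) -> int:
    # Illustration only: real discv4/discv5 node IDs are keccak256(public key).
    # SHA-256 of a label just yields a reproducible 256-bit integer to play with.
    return int.from_bytes(hashlib.sha256(label.encode()).digest(), "big")


def log_distance(a: int, b: int) -> int:
    # Kademlia distance is the XOR of two IDs; discv5's "log distance" is the
    # bit length of that XOR (0 = same ID, 256 = IDs differ in the top bit).
    return (a ^ b).bit_length()


def closest(target: int, known: list[int], k: int = 3) -> list[int]:
    # One lookup step: pick the k known nodes closest to the target by XOR.
    return sorted(known, key=lambda n: n ^ target)[:k]


if __name__ == "__main__":
    me = toy_node_id("me")
    peers = [toy_node_id(f"peer-{i}") for i in range(20)]
    buckets: dict[int, int] = {}
    for p in peers:
        d = log_distance(me, p)
        buckets[d] = buckets.get(d, 0) + 1
    # With random IDs, about half of the peers are expected at distance 256.
    print(sorted(buckets.items()))
```

Note that XOR distance is purely logical: two nodes at a small log distance can sit on opposite sides of the planet, so discovery by itself does nothing to favor geographically nearby peers.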

2.1.2 RLPx Transport Protocol

RLPx runs over TCP and establishes sessions through an ECIES (asymmetric encryption) handshake. All subsequent communication is encrypted with AES symmetric keys, and data is serialized with RLP (Recursive Length Prefix), a minimal encoding. This lightweight design keeps overhead low enough for nodes to run even on residential internet connections.
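The RLP rules are short enough to sketch in full. The encoder below is a minimal illustration following the rules described in the Ethereum Yellow Paper and the ethereum.org RLP documentation (byte strings, non-negative integers, and nested lists); it has no decoder and is not a substitute for a production library.

```python
def rlp_encode(item) -> bytes:
    """Minimal RLP encoder for byte strings, non-negative integers, and nested lists."""
    if isinstance(item, int):
        if item < 0:
            raise ValueError("RLP integers must be non-negative")
        # Integers become big-endian bytes with no leading zeros; 0 becomes the empty string.
        item = item.to_bytes((item.bit_length() + 7) // 8, "big")
    if isinstance(item, (bytes, bytearray)):
        if len(item) == 1 and item[0] < 0x80:
            return bytes(item)  # a single byte below 0x80 encodes as itself
        return _length_prefix(len(item), 0x80) + bytes(item)
    if isinstance(item, list):
        payload = b"".join(rlp_encode(x) for x in item)
        return _length_prefix(len(payload), 0xC0) + payload
    raise TypeError(f"cannot RLP-encode {type(item).__name__}")


def _length_prefix(length: int, offset: int) -> bytes:
    # Short payloads (< 56 bytes) put the length in the prefix byte itself;
    # longer ones prefix the big-endian length, preceded by its own size.
    if length < 56:
        return bytes([offset + length])
    length_bytes = length.to_bytes((length.bit_length() + 7) // 8, "big")
    return bytes([offset + 55 + len(length_bytes)]) + length_bytes


if __name__ == "__main__":
    print(rlp_encode([b"cat", b"dog"]).hex())  # c88363617483646f67
```

The one-byte prefix for short items is what keeps RLP framing overhead small: the list `["cat", "dog"]` costs only three bytes of framing on top of its six bytes of content.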

2.1.3 Wire Protocol and Sub-protocols

On top of the base protocol's ping/pong and session management, sub-protocols are layered: eth handles transaction propagation and block synchronization, and snap handles fast state synchronization through state snapshot exchange.

In summary, devp2p provides a single stack covering the entire process from node discovery to secure connection (RLPx) to data exchange (wire protocol). While devp2p has been validated on Ethereum mainnet for nearly a decade, it is not suited for the real-time consensus messaging between large numbers of validators that the consensus layer (beacon chain) requires. This led the Ethereum community to adopt the open-source libp2p for the consensus layer.

In fact, the P2P network with greater impact on today's Ethereum is the consensus layer rather than the execution layer.

In 2018-2019, while designing the networking requirements for Ethereum 2.0 (now the consensus layer), the Ethereum community decided to adopt libp2p, an open-source framework developed by Protocol Labs, instead of the existing devp2p. libp2p is a modular networking framework that powers IPFS, separating transport, multiplexing, peer discovery, NAT traversal, and other functions into individual modules for flexible composition.

Communication handled by libp2p on the beacon chain is broadly divided into the gossip domain and the request-response domain. Of these, gossip is the core of consensus.

2.2.1 GossipSub: Topic-Based Self-Optimizing Mesh Network

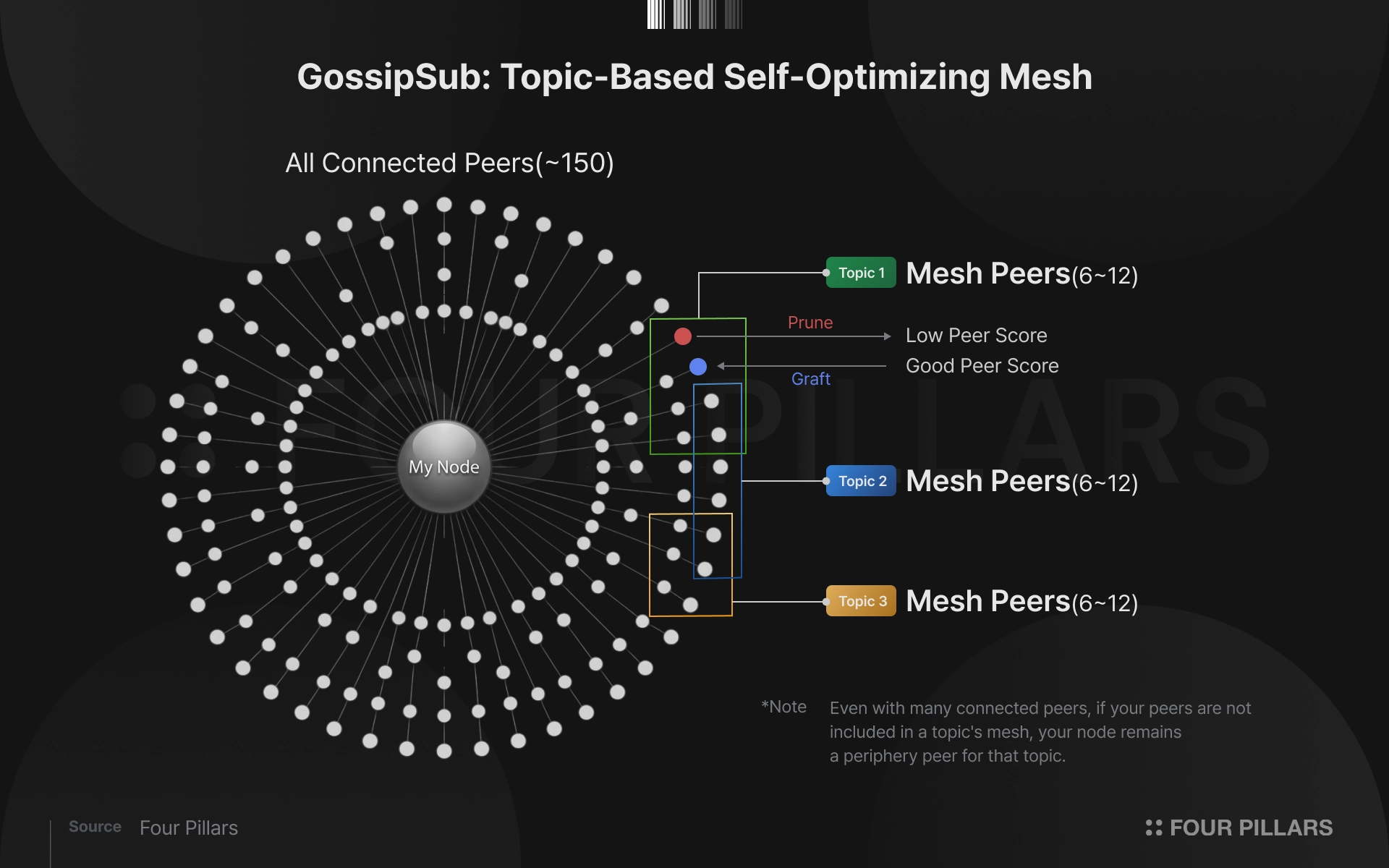

GossipSub is not a simple broadcast protocol. Its essence is a system that forms independent P2P overlays per topic. Each node "subscribes" to specific topics to participate in that topic's overlay, receiving and relaying messages within it.

GossipSub message propagation operates in two layers: the mesh layer and the gossip layer. In the mesh layer, a small number of peers selected per topic maintain tight connections for fast and reliable message delivery. In the gossip layer, periodic metadata exchange with peers outside the mesh ensures no messages are missed.

Understanding the mesh structure is key here. A single Ethereum CL node is typically connected to about 100 to 150 peers at the libp2p level. However, exchanging every message on every topic with every peer in real time would place excessive load on the network. So GossipSub selects only 6 to 12 peers per topic to form a mesh (the consensus specs set a target degree of D = 8, bounded by D_low = 6 and D_high = 12), and messages for that topic are exchanged directly only with these few mesh peers.

This is a critical point: no matter how many peers a node maintains, if the mesh composition for each topic (consisting of just 6 to 12 peers) is poor, the node cannot expect good performance.

2.2.2 Message Deduplication Mechanism (IHAVE/IWANT)

GossipSub uses control messages that indirectly share propagation state between peers via the IHAVE/IWANT pattern. The core mechanism works as follows: to peers outside the mesh, a node sends only IHAVE (a list of message IDs) saying "I have these messages," and the counterpart requests actual data via IWANT only when it lacks those messages. Peers that already have the messages do not send IWANT, significantly reducing unnecessary duplicate transmission. Each node stores received message IDs in a cache, ignoring and not re-propagating any message that arrives with a duplicate ID.
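A minimal sketch of the seen-message cache and the IHAVE/IWANT exchange follows. `message_id` is modeled on the consensus-specs message-id for Altair onward (SHA-256 over a domain prefix, the topic length and topic, and the payload, truncated to 20 bytes) and assumes the payload has already been snappy-decompressed. `GossipNode` is a hypothetical class; real GossipSub only advertises IDs from a short rolling message cache, not everything a node has ever seen.

```python
import hashlib

MESSAGE_DOMAIN_VALID_SNAPPY = bytes.fromhex("01000000")


def message_id(topic: str, decompressed_data: bytes) -> bytes:
    # Modeled on the consensus-specs message-id (Altair onward); assumes the
    # payload has already been snappy-decompressed.
    t = topic.encode()
    preimage = MESSAGE_DOMAIN_VALID_SNAPPY + len(t).to_bytes(8, "little") + t + decompressed_data
    return hashlib.sha256(preimage).digest()[:20]


class GossipNode:
    """Hypothetical node holding a seen-cache and a store for answering IWANT."""

    def __init__(self) -> None:
        self.seen: set[bytes] = set()        # IDs of messages already received
        self.store: dict[bytes, bytes] = {}  # payloads we can serve on IWANT

    def receive(self, topic: str, data: bytes) -> bool:
        mid = message_id(topic, data)
        if mid in self.seen:
            return False  # duplicate: drop it and do not re-propagate
        self.seen.add(mid)
        self.store[mid] = data
        return True       # new: would be forwarded to mesh peers

    def ihave(self) -> list[bytes]:
        # Advertise message IDs (not payloads) to peers outside the mesh.
        return list(self.store)

    def iwant(self, advertised: list[bytes]) -> list[bytes]:
        # Request only the advertised IDs we have not seen yet.
        return [mid for mid in advertised if mid not in self.seen]
```

Only 20-byte IDs cross the wire in IHAVE, so a peer that already holds a message pays almost nothing for being told about it again.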

The beacon chain's GossipSub continuously optimizes mesh topology through heartbeats at 0.7-second intervals. At each heartbeat, a node evaluates peers' responsiveness and message delivery quality, pruning slow or uncooperative peers from the mesh and grafting better ones in. This is why inter-node latency matters so much in the beacon chain's P2P network.
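The heartbeat logic can be sketched per topic as below, using the mesh degree bounds D = 8, D_low = 6, and D_high = 12 from the Ethereum consensus p2p spec. This is a simplification: real GossipSub v1.1 grafts randomly among non-negative-score peers and, when pruning, keeps only some peers by score (plus outbound-connection quotas), whereas this hypothetical `heartbeat` function ranks purely by score to make the role of scoring visible.

```python
D, D_LOW, D_HIGH = 8, 6, 12  # mesh degree target and bounds from the consensus p2p spec


def heartbeat(mesh: set[str], candidates: set[str], score: dict[str, float]) -> set[str]:
    """Simplified per-topic mesh maintenance, run once per heartbeat (0.7 s on the beacon chain)."""

    def s(peer: str) -> float:
        return score.get(peer, 0.0)

    # 1. Drop mesh peers whose score has gone negative.
    mesh = {p for p in mesh if s(p) >= 0}
    if len(mesh) < D_LOW:
        # 2. Too few peers: graft the best non-negative candidates until we reach D.
        pool = sorted((p for p in candidates - mesh if s(p) >= 0), key=s, reverse=True)
        mesh |= set(pool[: D - len(mesh)])
    elif len(mesh) > D_HIGH:
        # 3. Too many peers: prune down to the D highest-scoring ones.
        mesh = set(sorted(mesh, key=s, reverse=True)[:D])
    return mesh
```

In this sketch, a peer whose score stays below its neighbors' loses the ranking at every heartbeat, which is the mechanism behind the mesh exclusion described in the peer scoring discussion.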

2.2.3 (FYI) IDONTWANT (GossipSub v1.2)

Starting in late 2025, GossipSub v1.2 was adopted by major CL clients, adding the IDONTWANT control message. When a node has already received a specific message, it immediately tells mesh peers "do not send this message." While IHAVE/IWANT operates on heartbeat intervals, IDONTWANT acts instantaneously, dramatically reducing duplicate reception of large messages like blob data.

In discussions about raising blob parameters through Blob-Parameter-Only (BPO) forks around the Fusaka hard fork, IDONTWANT's bandwidth savings were cited as one of the key justifications for increasing blob counts.

2.2.4 Peer Scoring: The Reputation System That Determines Mesh Membership

GossipSub v1.1 introduced a peer scoring system that evaluates each peer's behavior per topic. High-scoring peers are preferred for message propagation and mesh membership, while peers whose scores fall below configured thresholds are pruned from the mesh and, at the lowest (graylist) threshold, have their messages ignored entirely. Because scores accumulate over time, this scoring naturally favors long-lived peer identities, increasing resistance to Sybil attacks in which an adversary creates masses of fake nodes to disrupt the network.

When peer scoring meets the mesh structure, a critical dynamic of Ethereum's P2P network emerges. If a node is included in meshes across many topics, its score as seen by its peers is generally high. Peers that deliver messages quickly and accurately are preferred across topics, which increases their probability of mesh inclusion. Conversely, as a node's score drops, it gets pruned from multiple meshes and its participation shrinks.

In other words, even with many peers, a node with low scores may find itself excluded from the mesh for most topics, resulting in poor practical performance. It remains connected but becomes a "periphery peer" limited to IHAVE/IWANT exchanges in the gossip layer.

The main factors affecting peer score include first message delivery frequency, mesh participation time, and invalid message transmission count. Here, latency acts as a key variable. Nodes geographically far from network hubs structurally receive and deliver the same messages later than closer nodes. This systematically lowers their "first delivery" score and increases the probability of mesh exclusion.
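The sketch below shows how the three factors named above could combine into a per-topic score, in the spirit of the GossipSub v1.1 score function (P1 time in mesh, P2 first message deliveries, P4 invalid messages). The weights and caps are hypothetical values chosen for readable arithmetic. The real function also decays counters over time and includes mesh delivery-rate penalties (P3/P3b) and application-specific, IP-colocation, and behavioral terms, all omitted here.

```python
from dataclasses import dataclass

# Hypothetical weights and caps, chosen only to make the arithmetic readable;
# real clients tune these per topic.
W_TIME_IN_MESH, TIME_QUANTUM_S, TIME_CAP = 0.1, 12.0, 10.0
W_FIRST_DELIVERY, FIRST_DELIVERY_CAP = 1.0, 50.0
W_INVALID = -10.0


@dataclass
class TopicStats:
    seconds_in_mesh: float   # P1: how long the peer has been in our mesh
    first_deliveries: float  # P2: messages this peer delivered to us first
    invalid_messages: float  # P4: invalid messages received from this peer


def topic_score(s: TopicStats, topic_weight: float = 1.0) -> float:
    p1 = min(s.seconds_in_mesh / TIME_QUANTUM_S, TIME_CAP)
    p2 = min(s.first_deliveries, FIRST_DELIVERY_CAP)
    p4 = s.invalid_messages ** 2  # squared, so repeat offenders are punished hard
    return topic_weight * (W_TIME_IN_MESH * p1 + W_FIRST_DELIVERY * p2 + W_INVALID * p4)


def peer_score(per_topic: dict[str, TopicStats]) -> float:
    return sum(topic_score(s) for s in per_topic.values())


if __name__ == "__main__":
    near = TopicStats(seconds_in_mesh=600, first_deliveries=40, invalid_messages=0)
    far = TopicStats(seconds_in_mesh=600, first_deliveries=10, invalid_messages=0)
    print(topic_score(near), topic_score(far))  # 41.0 11.0
```

With these toy weights, two peers with identical uptime and no invalid messages end up 30 points apart purely because one delivered first 40 times and the other 10 times, which is exactly the gap that latency creates.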

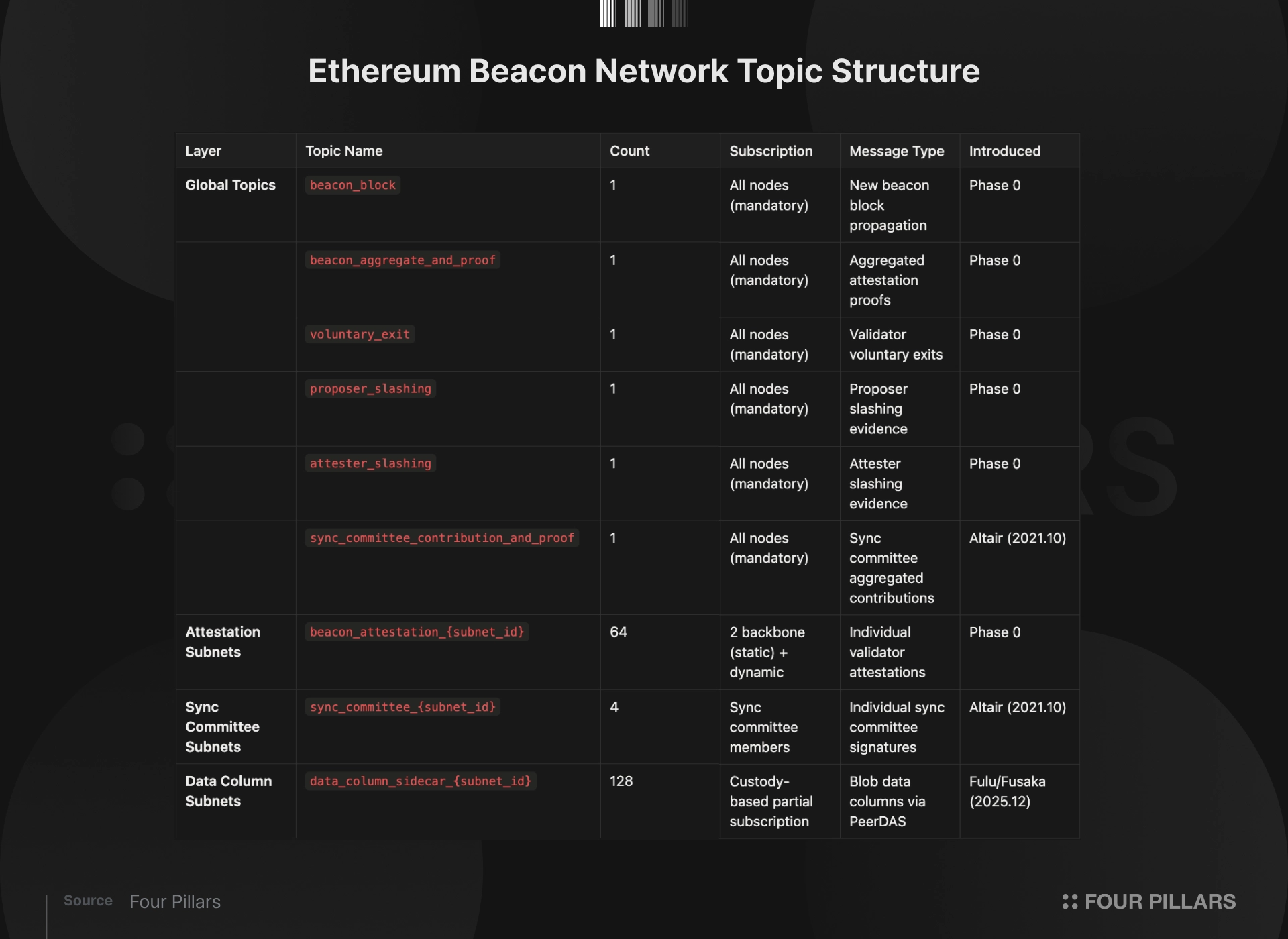

2.2.5 Global Topics, Attestation Subnets, Sync Committee Subnets, Data Column Subnets

The beacon network's GossipSub topics fall into four categories. After the Fusaka hard fork, the current mainnet beacon network topic structure is as follows.

Global topics must be subscribed to by all nodes. Among these, beacon_block and beacon_aggregate_and_proof account for most of the bandwidth.

Attestation subnets (64) distribute the processing of attestations from over 1 million validators. Each slot, validators are split into up to 64 committees, and each committee's attestations are propagated only on its corresponding subnet topic, so each node processes only a fraction of total attestation traffic.
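For reference, the mapping from committee to subnet is a short function in the consensus specs. The Python below mirrors `get_committee_count_per_slot` and `compute_subnet_for_attestation` as we understand them from the phase0 specs, simplified to take plain integers (an active validator count instead of the beacon state) rather than the spec's SSZ types.

```python
SLOTS_PER_EPOCH = 32
MAX_COMMITTEES_PER_SLOT = 64
TARGET_COMMITTEE_SIZE = 128
ATTESTATION_SUBNET_COUNT = 64


def get_committee_count_per_slot(active_validators: int) -> int:
    # At least one committee per slot, at most 64, targeting ~128 members each.
    return max(1, min(MAX_COMMITTEES_PER_SLOT,
                      active_validators // SLOTS_PER_EPOCH // TARGET_COMMITTEE_SIZE))


def compute_subnet_for_attestation(committees_per_slot: int, slot: int, committee_index: int) -> int:
    # Committees are numbered consecutively across the epoch, then wrapped onto 64 subnets.
    slots_since_epoch_start = slot % SLOTS_PER_EPOCH
    committees_since_epoch_start = committees_per_slot * slots_since_epoch_start
    return (committees_since_epoch_start + committee_index) % ATTESTATION_SUBNET_COUNT
```

With at least 32 × 64 × 128 = 262,144 active validators, every slot has the full 64 committees, so each attestation subnet carries exactly one committee's traffic per slot.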

Sync committee subnets (4) were introduced in the Altair hard fork for light client support. The sync committee, composed of 512 validators, rotates approximately every 27 hours (256 epochs) and submits signatures on the current chain head every slot. These signatures are propagated through 4 subnets (sync_committee_{0-3}), then aggregated and broadcast to the global topic. Light clients can verify the chain head using only this aggregated signature, enabling Ethereum state tracking without a full node.
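The sync committee numbers can be checked with a little arithmetic. This sketch assumes the Altair constants (512 members, 4 subnets, 256-epoch periods, 12-second slots) and mirrors the spec's rule that a member's subnet is its committee index divided by 128; `sync_subnet_for_index` and `sync_period_hours` are hypothetical helper names. A validator can occupy several committee positions, in which case it joins every corresponding subnet.

```python
SYNC_COMMITTEE_SIZE = 512
SYNC_COMMITTEE_SUBNET_COUNT = 4
EPOCHS_PER_SYNC_COMMITTEE_PERIOD = 256
SLOTS_PER_EPOCH = 32
SECONDS_PER_SLOT = 12


def sync_subnet_for_index(index_in_committee: int) -> int:
    # Each subnet carries a contiguous block of 512 / 4 = 128 committee positions.
    return index_in_committee // (SYNC_COMMITTEE_SIZE // SYNC_COMMITTEE_SUBNET_COUNT)


def sync_period_hours() -> float:
    return EPOCHS_PER_SYNC_COMMITTEE_PERIOD * SLOTS_PER_EPOCH * SECONDS_PER_SLOT / 3600


if __name__ == "__main__":
    print(round(sync_period_hours(), 2))                            # 27.31
    print([sync_subnet_for_index(i) for i in (0, 127, 128, 511)])  # [0, 0, 1, 3]
```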

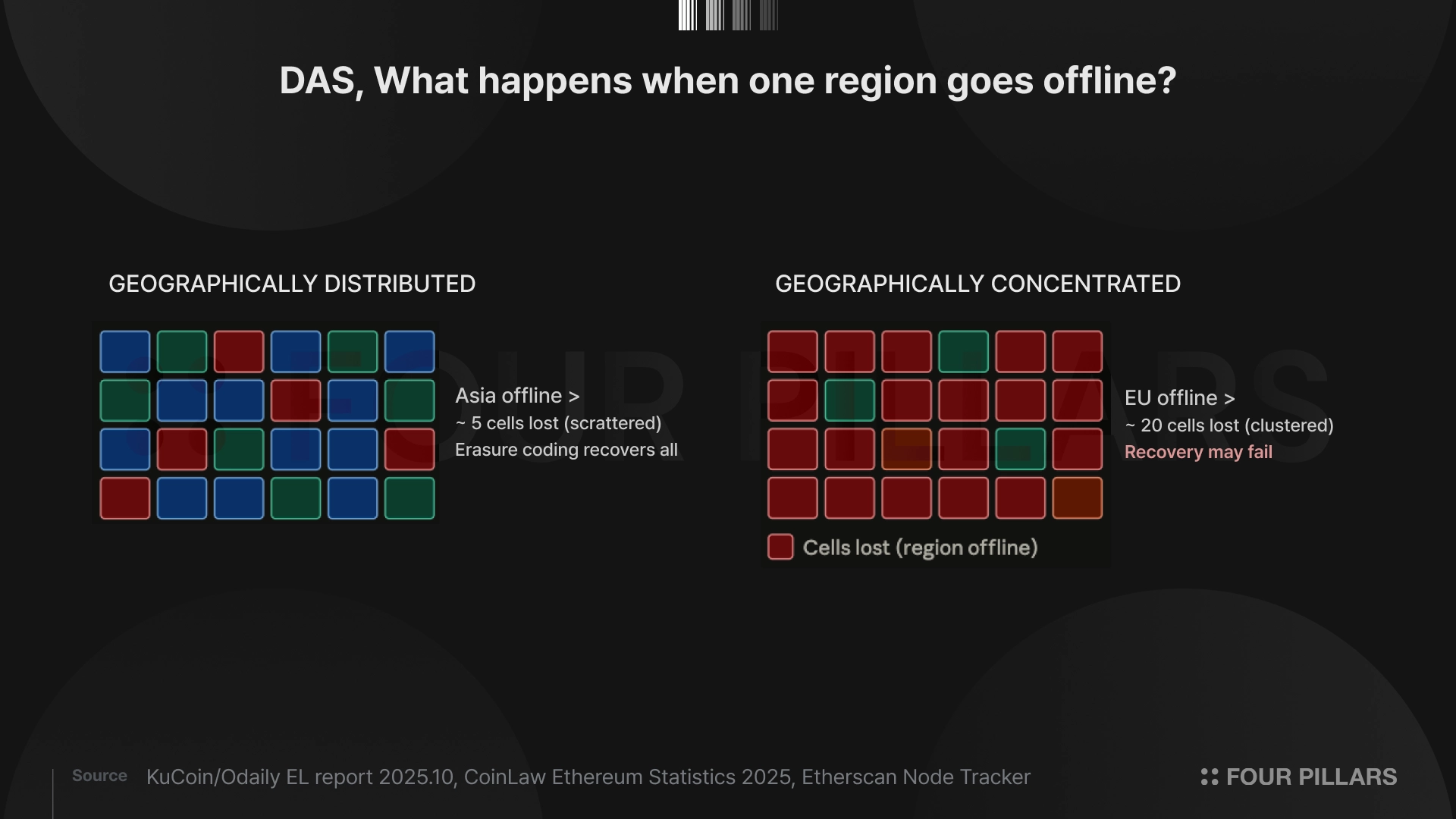

Data column subnets (128) were introduced with PeerDAS (EIP-7594) in the Fusaka hard fork as the current mainnet's blob data propagation structure, replacing the blob_sidecar_{subnet_id} topics introduced in Deneb. PeerDAS extends blob data with erasure coding and splits it into 128 columns, any 64 of which (half) are enough to reconstruct the original data. Each node custodies only a few columns determined by its node ID and verifies the remaining columns by sampling from peers.

Nodes attached to validators with a total of 4,096 ETH or more are classified as supernodes and must custody all 128 columns. This is not merely an imposed burden but a key design element supporting PeerDAS's safety. Reconstructing erasure-coded data to its original form requires a node holding at least half of the columns, a role supernodes fill by default, and supernodes also redistribute missing columns to other nodes. The 4,096 ETH threshold effectively assigns infrastructure responsibility in proportion to economic stake, placing it on the large stakers with the greatest interest in network health, whose higher bandwidth and storage capacity help guarantee the network's overall data availability.
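The custody rules described above can be sketched as follows. The constants reflect our reading of the Fulu (PeerDAS) consensus specs, where custody scales by one group per 32 ETH of attached validator balance, with a floor of 8 and a ceiling of 128; verify them against the current spec before relying on them. Balances are in whole ETH here rather than Gwei, and the function names are hypothetical.

```python
NUMBER_OF_COLUMNS = 128
NUMBER_OF_CUSTODY_GROUPS = 128
CUSTODY_REQUIREMENT = 4            # baseline for a node with no validators attached
VALIDATOR_CUSTODY_REQUIREMENT = 8  # floor once validators are attached
BALANCE_PER_ADDITIONAL_CUSTODY_GROUP_ETH = 32


def custody_group_count(attached_balance_eth: int) -> int:
    if attached_balance_eth <= 0:
        return CUSTODY_REQUIREMENT
    count = attached_balance_eth // BALANCE_PER_ADDITIONAL_CUSTODY_GROUP_ETH
    return min(max(count, VALIDATOR_CUSTODY_REQUIREMENT), NUMBER_OF_CUSTODY_GROUPS)


def is_supernode(attached_balance_eth: int) -> bool:
    # A node custodying every group holds every column.
    return custody_group_count(attached_balance_eth) == NUMBER_OF_CUSTODY_GROUPS


def can_reconstruct(columns_held: int) -> bool:
    # With a rate-1/2 erasure code, any half of the columns recovers the rest.
    return columns_held >= NUMBER_OF_COLUMNS // 2
```

The supernode threshold falls out of the arithmetic: 128 groups × 32 ETH = 4,096 ETH.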

Geographic distribution becomes even more critical in this structure. Since each node holds only a portion of the total data, columns must be evenly distributed across the network for any column to be quickly sampled. If nodes are concentrated in a specific region, a network outage in that region could render multiple columns simultaneously inaccessible.

A backbone subscription mechanism exists for stable operation of each subnet. Every beacon node must statically subscribe to 2 subnets determined deterministically by its node ID. This forms the "skeleton" of each subnet, maintaining message relay infrastructure even when no validators are assigned to that subnet in a given epoch. When a validator must perform attestation duties in a specific slot, it finds peers for that subnet via the attnets bitfield in the ENR (Ethereum Node Record) and connects through dynamic subscription.
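The attnets field is an SSZ Bitvector[64], so finding backbone peers for a subnet reduces to a bit test. The helpers below are a hypothetical sketch: they assume the 8 raw attnets bytes have already been extracted from each peer's ENR, and they omit ENR decoding and signature verification.

```python
ATTESTATION_SUBNET_COUNT = 64


def decode_attnets(attnets: bytes) -> list[int]:
    """Decode an SSZ Bitvector[64] (bit i lives in byte i // 8, bit i % 8) into subnet IDs."""
    if len(attnets) != ATTESTATION_SUBNET_COUNT // 8:
        raise ValueError("attnets must be exactly 8 bytes")
    return [i for i in range(ATTESTATION_SUBNET_COUNT) if (attnets[i // 8] >> (i % 8)) & 1]


def encode_attnets(subnets: list[int]) -> bytes:
    out = bytearray(ATTESTATION_SUBNET_COUNT // 8)
    for i in subnets:
        out[i // 8] |= 1 << (i % 8)
    return bytes(out)


def peers_for_subnet(peer_attnets: dict[str, bytes], subnet: int) -> list[str]:
    # peer_attnets: hypothetical map of peer ID -> raw attnets bytes taken from its ENR.
    return [peer for peer, bits in peer_attnets.items() if subnet in decode_attnets(bits)]
```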

The implication is clear. Approximately 200 to 500 backbone nodes per subnet are needed to ensure stable message propagation, and the geographic distribution of these backbone nodes directly determines each subnet's latency characteristics.
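A back-of-the-envelope sketch shows where the 200 to 500 figure sits. It assumes each beacon node backs 2 of the 64 subnets and that node-ID-based assignment spreads nodes uniformly across subnets; the function names are hypothetical.

```python
SUBNETS_PER_NODE = 2
ATTESTATION_SUBNET_COUNT = 64


def expected_backbone_per_subnet(beacon_nodes: int) -> float:
    # Each node backs SUBNETS_PER_NODE subnets, assumed spread uniformly by node ID.
    return beacon_nodes * SUBNETS_PER_NODE / ATTESTATION_SUBNET_COUNT


def nodes_for_backbone(per_subnet: int) -> int:
    # Inverse: network-wide beacon node count that yields per_subnet backbone nodes.
    return per_subnet * ATTESTATION_SUBNET_COUNT // SUBNETS_PER_NODE


if __name__ == "__main__":
    print(nodes_for_backbone(200), nodes_for_backbone(500))  # 6400 16000
    print(expected_backbone_per_subnet(10_000))              # 312.5
```

Under that assumption, 200 to 500 backbone nodes per subnet corresponds to roughly 6,400 to 16,000 beacon nodes network-wide, and a region hosting 400 of 10,000 nodes (4%) would contribute only about 12.5 backbone nodes to each subnet.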

2.2.6 Request-Response Domain

Separate from the gossip domain, the request-response domain handles direct requests for specific data from a particular peer, such as requesting beacon blocks by root hash (BeaconBlocksByRoot) or requesting the blocks within a slot range (BeaconBlocksByRange). Responses are encoded as Snappy-compressed SSZ (Simple Serialize) bytes. This domain is mainly used for node synchronization and data recovery; propagation speed is less critical than in the gossip domain, but it affects how quickly newly joining nodes can bootstrap.

Looking purely at network performance, having peers concentrated in a single region is advantageous.

In GossipSub, message propagation time is roughly "number of hops × latency per hop." Between nodes in the same Frankfurt data center, per-hop latency is under 1 ms. In contrast, a one-way trip from Frankfurt to Seoul takes approximately 120 to 150 ms. Since block proposals, attestation votes, and aggregation must all complete within Ethereum's 12-second slot, geographic distance between nodes is a clear handicap.
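The "hops × latency" model can be made concrete with a rough sketch. It assumes a fan-out tree in which each hop multiplies reach by the mesh degree D = 8 (real meshes have redundant paths, so this is only an order-of-magnitude estimate), takes 135 ms as the midpoint of the 120 to 150 ms Frankfurt-Seoul one-way figure, and compares against the point one third of the way into a 12-second slot, when attestations are due. `processing_ms_per_hop` is a hypothetical parameter left at zero by default.

```python
ATTESTATION_DEADLINE_MS = 12_000 // 3  # attestations are due one third into a 12 s slot


def estimated_hops(nodes: int, mesh_degree: int = 8) -> int:
    # Rough fan-out model: each hop multiplies the number of reached nodes by ~D.
    hops, reached = 0, 1
    while reached < nodes:
        reached *= mesh_degree
        hops += 1
    return hops


def path_latency_ms(hop_latencies_ms: list[float], processing_ms_per_hop: float = 0.0) -> float:
    # Propagation time ~ sum over hops of (link latency + per-hop validation time).
    return sum(lat + processing_ms_per_hop for lat in hop_latencies_ms)


if __name__ == "__main__":
    hops = estimated_hops(10_000)                              # 5 hops with D = 8
    local = path_latency_ms([1.0] * hops)                      # every hop inside Frankfurt
    one_long = path_latency_ms([135.0] + [1.0] * (hops - 1))   # one Frankfurt-Seoul hop
    print(hops, local, one_long, ATTESTATION_DEADLINE_MS)      # 5 5.0 139.0 4000
```

Per-hop validation (signature checks, block processing) is not modeled and can far exceed sub-millisecond link latency, so treat these figures as lower bounds. The point is that a single long-haul hop costs more than the entire local path.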

A 2025 empirical study published in the Cluster Computing journal (Mohandas-Daryanani et al.) supports this. Nodes in Oceania and Southeast Asia showed higher latency distributions when receiving block messages, with the Sydney-based node seeing 10% of block messages arriving after 4 seconds. Network participation accuracy directly translates to rewards and penalties on Ethereum. Consequently, the Sydney node received 10% lower average rewards compared to other regions and had the highest block proposal failure rate at 4%.

If Ethereum were simply a high-performance database, the optimal choice would be to place all nodes in an AWS data center in Virginia. In practice, many blockchains, especially those targeting high performance, show geographically concentrated validator distributions.

Source: Solanabeach

Source: Aptos

But Ethereum is not a simple high-performance database. Ethereum aspires to be "trustless, neutral, global infrastructure," and pursuing that goal means accepting a tradeoff between geographic distribution and performance optimization. The reasons are threefold.

First, geographic diversity and censorship resistance. In August 2022, after the US OFAC placed Tornado Cash on the sanctions list, the share of blocks produced by OFAC-compliant MEV-Boost relays reached 79%. A single jurisdiction's regulatory decision controlled transaction inclusion across the entire network. Community pushback and the emergence of non-censoring relays brought this ratio down to 27% by mid-2023, but the incident was deeply instructive. When nodes and infrastructure are concentrated in a single jurisdiction, a single government decision can threaten the network's neutrality.

Second, network resilience. Nodes distributed across diverse jurisdictions ensure that even if a specific group of nodes goes offline, the network can continue normal operation. Geographic distribution is insurance against single-region failures such as natural disasters, submarine cable cuts, or politically motivated internet shutdowns.

Third, the structural diversity demanded by Ethereum's P2P protocol itself. This point will be examined in depth in the following section.

The following characteristics demonstrate that geographic diversity in Ethereum's P2P protocol is not "nice to have" but a necessary design prerequisite.

3.3.1 Bridge Dependency in Mesh Topology

When nodes concentrate in a specific region, latency within that region drops, but nodes outside the cluster depend on a few "bridge nodes" for messages. If these bridge nodes fail, the entire peripheral region's message reception is cut off. The more geographically distributed nodes there are, the richer the alternative paths within the mesh topology, reducing single-bridge dependency.

3.3.2 Geographic Skew Risk in Subnet Backbones

Each of the 64 attestation subnets requires backbone nodes. Since subnet assignment is determined deterministically based on node ID, if the overall network's nodes are geographically concentrated, specific subnets' backbones will skew toward specific regions. If validators assigned to those subnets are located in other regions, additional latency occurs in attestation propagation.
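A toy simulation illustrates the point. It assigns each node a region by weight and then two distinct pseudo-random subnets; in the real protocol the pair is derived from a node-ID prefix by the spec's subscription function, so the random draw here is only a stand-in. The region weights are a proxy taken from the Lido Q4/2025 validator shares cited in this article, not actual node shares, and `simulate_backbones` is a hypothetical name.

```python
import random
from collections import Counter

ATTESTATION_SUBNET_COUNT = 64
SUBNETS_PER_NODE = 2


def simulate_backbones(region_weights: dict[str, float], nodes: int, seed: int = 0) -> list[Counter]:
    """Toy model: give each node a region, then 2 distinct pseudo-random subnets."""
    rng = random.Random(seed)
    regions = list(region_weights)
    weights = [region_weights[r] for r in regions]
    per_subnet = [Counter() for _ in range(ATTESTATION_SUBNET_COUNT)]
    for _ in range(nodes):
        region = rng.choices(regions, weights=weights)[0]
        # Stand-in for the node-ID-derived subscription: any 2 distinct subnets.
        for subnet in rng.sample(range(ATTESTATION_SUBNET_COUNT), SUBNETS_PER_NODE):
            per_subnet[subnet][region] += 1
    return per_subnet


if __name__ == "__main__":
    # Proxy weights: Lido Q4/2025 validator shares (%), not actual node shares.
    weights = {"Europe": 60.0, "North America": 18.6, "Asia-Pacific": 17.3,
               "Africa": 2.1, "South America": 1.7}
    subnets = simulate_backbones(weights, nodes=10_000)
    sa_counts = [c["South America"] for c in subnets]
    print("South America backbone nodes per subnet: min", min(sa_counts), "max", max(sa_counts))
```

Even with perfectly uniform assignment, every subnet's backbone inherits the global skew, and a small region ends up with only a handful of backbone nodes per subnet, subject to considerable random variation.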

3.3.3 Geographic Echo Chambers Created by Peer Scoring and the Mesh Exclusion Spiral

As examined in Section 2.2.4 (Peer Scoring), GossipSub's peer scoring awards high scores to peers that deliver messages quickly and accurately, and prunes low-scoring peers from the mesh. When this mechanism combines with geographic concentration, a self-reinforcing vicious cycle emerges.

Consider a node located in Asia as an example. With 120 to 150ms latency to the European/North American node hubs, it structurally receives and delivers the same messages later than European nodes. This lowers the "first message delivery" score. As scores drop, the probability of being excluded from key topics' meshes increases, and mesh exclusion means receiving messages even later, further lowering scores. The cycle repeats: mesh exclusion, late reception, lower score, further mesh exclusion.

Simultaneously, in node-dense regions like Europe and North America, the opposite virtuous cycle operates. Nearby peers exchange messages quickly, boosting each other's scores, and high scores ensure stable inclusion in meshes across multiple topics. The result is an "echo chamber" where geographically close nodes select each other as high-score peers, while peripheral region nodes are structurally marginalized in peering.

Critically, having many peers does not solve this problem. Even if an Asian node is connected to 150 peers, if it fails to be included in the mesh (6 to 12 peers) for most topics, its practical performance can be lower than a European node that is in the mesh. It remains a "periphery peer" limited to IHAVE/IWANT exchanges in the gossip layer.

The structural solution is to increase node density within Asia to form local meshes. When Asian nodes form meshes with low latency among themselves, their peer scores rise, enabling them to become competitive peers in the global mesh. An Asian node cluster connecting Seoul-Tokyo-Singapore-Hong Kong has the potential to become not just geographic diversity, but a new hub in the global GossipSub mesh topology.

The effects of geographic concentration extend beyond validators to ordinary users.

For a user sending transactions, more nearby nodes mean faster arrival in the mempool, which affects how quickly the transaction is included in a block. If tens of millions of dApp users in Asia must have their transactions physically travel to European/North American node hubs before propagation even begins, that is a structural imbalance in user experience.

For DeFi protocols, this latency difference is even more sensitive. In an environment where price oracle updates, liquidation triggers, and arbitrage opportunity capture compete on a millisecond basis, requiring users in certain regions to accept structurally disadvantageous latency is incompatible with the principle of network neutrality.

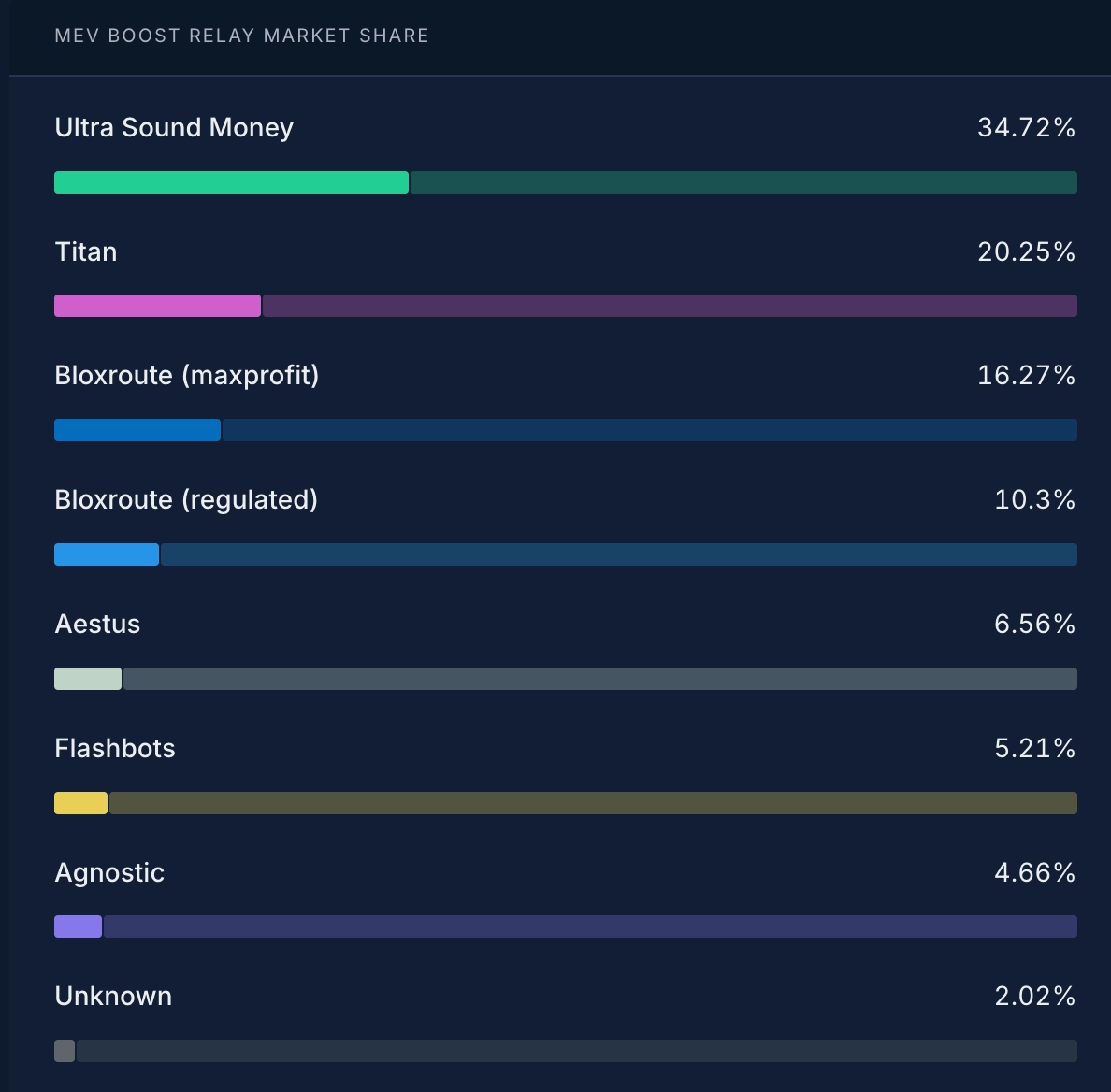

Worse, at present there are no MEV relays in Asia, Africa, or South America. All major relays (Flashbots, bloXroute, Ultra Sound, Titan, etc.) operate from the US/Europe, so validators in non-Western regions must accept additional latency even when proposing blocks. In our experience running Ethereum validators in Asia, most missed high-value blocks from MEV-Boost relays were caused by latency to the relays.

Source: Rated.network

4.1.1 Execution Layer

Of approximately 10,475 EL nodes, the US accounts for over 30%, Germany 13.13%, and the UK 4.47%. The top 3 countries (US, Germany, UK) alone represent roughly half the total, all Western jurisdictions. In Asia, Singapore stands out at approximately 6.5%, while South America, Africa, and the Middle East combined amount to just a few percent.

4.1.2 Regional Validator Distribution

The most granular public data on geographic validator distribution comes from Lido's quarterly VaNOM report. Analyzing Lido's Q4/2025 data (332,117 validators), which represents approximately 23% of total Ethereum staking, reveals the concentration structure clearly.

Europe dominates with 199,416 (60.0%), with Germany alone at 67,787 (20.4%) in first place. North America accounts for 61,798 (18.6%), bringing Europe + North America to 78.7% combined. Asia-Pacific has 57,530 (17.3%), but Australia (17,098, 5.1%) and Singapore (16,532, 5.0%) account for more than half of that.

South America (5,784, 1.7%) and Africa (6,967, 2.1%) together account for less than 4%. Asia, home to 60% of the world's population, and Africa and South America, where crypto adoption is growing fastest, are effectively absent from the consensus infrastructure.

4.1.3 Vulnerability of Single-Operator Dependency

The most dramatic illustration of this concentration risk is South Korea. As of Q4/2025, South Korea had 8,886 validators, ranking third in Asia within Lido, but this figure was effectively dependent on a single operator (A41). When A41 ceased operations in early 2026, validator nodes operating from South Korea essentially disappeared. This is not a Korea-specific problem; it exposes the structural vulnerability of non-Western regional infrastructure built on a small number of operators.

4.1.4 Cloud Infrastructure Provider Concentration

Cloud infrastructure follows the same pattern. Of hosted EL nodes, 35.53% run on AWS, 13.75% on Hetzner (Germany), and 9.69% on OVHcloud (France). For Lido's curated module, public cloud accounts for 47.7%, with dedicated servers at 26.4% and bare metal making up the remainder. Non-Western cloud providers like Alibaba Cloud and Tencent Cloud have negligible presence. Not only the nodes themselves but also the infrastructure hosting them is concentrated in the West: a double concentration structure.

The most frequently cited barrier to running validators outside Europe/North America is latency. However, this barrier can be largely overcome through architectural-level efforts.

According to the Cluster Computing study cited earlier, a node operated in Singapore recorded a 0% block proposal failure rate, as did the Frankfurt and Toronto nodes. The problematic location was Sydney (Oceania), much farther from the network center. This suggests that physical distance from the network center, the quality of local internet infrastructure, and node density all play compounding roles. Major internet hubs on each continent, such as the East Asian backbone connecting Seoul-Tokyo-Singapore and the South American backbone connecting Sao Paulo-Buenos Aires, have the potential to reduce the latency gap with Europe/North America to a manageable level.

The same study also found that certain consensus clients responded effectively in high-latency environments while others did not. This means client selection and environment tuning can offset geographic disadvantages to some degree.

Running validators outside Europe/North America provides structural value to the entire Ethereum network beyond individual operator profitability.

4.3.1 Genuine Diversification of Censorship Resistance

The 1-out-of-N honesty assumption of FOCIL, which will be examined in more detail in the next section, works most effectively when the 17 inclusion list committee members are distributed across different jurisdictions. Since US OFAC sanctions do not directly apply to validators in South Korea, Singapore, Brazil, or South Africa, they can serve as the "last line of defense" for jurisdictional diversity.
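The 1-out-of-N logic can be quantified with a simple probability sketch. It assumes committee members are sampled independently in proportion to stake (a reasonable approximation for a large validator set) and asks how likely it is that at least one of the 17 members sits outside a given jurisdiction. The share 0.787 is used only as a proxy because it is the Europe + North America share in the Lido data cited earlier; `p_some_member_outside` is a hypothetical name.

```python
def p_some_member_outside(share_inside: float, committee_size: int = 17) -> float:
    """Probability that at least one of `committee_size` randomly sampled IL
    committee members is outside a jurisdiction holding `share_inside` of stake.
    Assumes independent sampling in proportion to stake."""
    if not 0.0 <= share_inside <= 1.0:
        raise ValueError("share_inside must be in [0, 1]")
    return 1.0 - share_inside ** committee_size


if __name__ == "__main__":
    for share in (0.5, 0.787, 0.95, 0.99):
        print(f"{share:.3f} -> {p_some_member_outside(share):.4f}")
```

This only covers committee composition. Whether those members' inclusion lists actually reach the proposer and attesters in time is a P2P propagation question, which is where the latency issues above come back in.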

4.3.2 Geographic Balance of GossipSub Subnets and Data Columns

The more geographically diverse the backbone nodes for the 64 attestation subnets and 128 data column subnets, the better subnet/column availability is preserved during regional outages. This becomes even more critical under PeerDAS.

4.3.3 Global Balance of P2P Mesh Topology

The peer score echo chamber effect analyzed in Section 3 can only be resolved by forming node clusters in non-Western regions. If healthy node hubs exist in Asia, South America, and Africa, GossipSub message propagation paths achieve global balance and the overall network's latency distribution improves.

4.4.1 CL Client Selection and Node Tuning for Regional Characteristics

As the Cluster Computing study cited in Section 3 demonstrates, performance varies significantly by client choice even at the same geographic location. Strategies for optimizing attestation timing in high-latency environments (attestation submission delay adjustment, block reception timeout settings, etc.) differ by client, and some clients respond effectively even far from the network center. For those running validators in non-Western regions, the first step is selecting the client that shows the highest attestation accuracy in their network environment, based on measured data.

4.4.2 Building Regional Peering Networks

This is the most direct way to break the peer score spiral analyzed in Section 3. The key is forming local meshes between major hub cities on each continent. For example, if an Asian node cluster forms between Seoul-Tokyo-Singapore-Hong Kong (inter-city latency 30 to 70ms), or a South American cluster between Sao Paulo-Buenos Aires-Santiago, mutual peer scores within the local mesh rise, enabling these nodes to become competitive peers in the global mesh as well. Such regional clusters have the potential to become new hubs in the global GossipSub mesh topology, going beyond simple geographic diversity.

4.4.3 Leveraging DVT (Distributed Validator Technology)

DVT, where operators across multiple regions manage a single validator key in a distributed manner, can spread individual operators' geographic disadvantages across a team. When operators in Asia and Europe, or South America and North America, form DVT clusters, they can combine latency advantages from both sides while also securing jurisdictional diversity. Lido's Simple DVT module, which already maintains a balanced distribution across Europe, the Americas, and Asia-Pacific, is a real-world example of this approach.

However, DVT does not eliminate geographic latency problems; it can significantly exacerbate them. DVT protocols like SSV and Obol reach consensus over separate P2P channels between cluster nodes. Because cluster nodes must gather signatures on the same head, latency between cluster nodes translates directly into internal consensus delay. For example, the 120ms round-trip latency between Seoul and Frankfurt applies directly to DVT internal consensus, delaying the cluster's attestation submission. As OisinKyne from Obol pointed out on ethresear.ch, when some operators in a 7-operator DVT cluster fail to see the proposer's block in time, head votes diverge and rewards decrease. Ultimately, DVT clusters also reach internal consensus faster when their nodes are geographically close, which aligns with the importance of the regional local meshes discussed earlier.
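The effect of operator placement can be seen with a rough quorum-latency model. In this Python sketch, an operator must collect m matching messages before a round completes, so each round is gated by its (m-1)-th fastest peer; three rounds are assumed, loosely mirroring a pre-prepare/prepare/commit flow. The function names and latency matrices are hypothetical, and this is not how SSV or Obol actually schedule messages.

```python
def round_delay_ms(one_way_ms, node, m):
    """Time (ms) for `node` to hold m matching messages in one all-to-all round.

    one_way_ms[i][j]: hypothetical one-way latency from operator i to operator j.
    The node's own message counts immediately, so it waits for the (m-1)-th
    fastest message from the other operators.
    """
    if m <= 1:
        return 0
    incoming = sorted(one_way_ms[i][node] for i in range(len(one_way_ms)) if i != node)
    return incoming[m - 2]


def consensus_delay_ms(one_way_ms, node, m, rounds):
    """Rough lower bound: each of `rounds` message rounds waits on the same quorum.

    Ignores leader-only rounds, signature processing, and retries.
    """
    return rounds * round_delay_ms(one_way_ms, node, m)


# 4 operators, threshold 3. Operators 0-2 in Seoul/Tokyo, operator 3 in Frankfurt
# (60ms one-way, i.e. half of the 120ms round trip).
regional = [
    [0, 5, 15, 60],
    [5, 0, 12, 60],
    [15, 12, 0, 60],
    [60, 60, 60, 0],
]
# Same size and threshold, but split two-and-two between Seoul and Frankfurt.
split = [
    [0, 5, 60, 60],
    [5, 0, 60, 60],
    [60, 60, 0, 5],
    [60, 60, 5, 0],
]

for name, matrix in (("regional", regional), ("split", split)):
    print(name, consensus_delay_ms(matrix, node=0, m=3, rounds=3))
```

With three of four operators nearby, the Seoul operator's quorum forms from local peers and the Frankfurt link never gates it (45ms over three rounds). Splitting the cluster two-and-two makes every round wait on the intercontinental link (180ms).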

A potential long-term solution to this problem is the Native DVT proposal that Vitalik Buterin published in January 2026, covered in "5.3 Native DVT: Protocol-Embedded DVT That Reduces the P2P Cost of Geographic Distribution."

4.4.4 Community and Governance Participation

Technical infrastructure alone is not enough. Staking protocols are actively pursuing geographic diversity, as Lido's Wave 5 onboarding, with its explicit priority on "operators outside EU and US," demonstrates. Yet the current Ethereum community remains concentrated in the Western world, so active participation by non-Western operators and researchers in Ethereum's governance processes, including EIP discussions, All Core Devs calls, and the Ethereum Magicians forum, is the most direct path to reflecting global perspectives in protocol design.

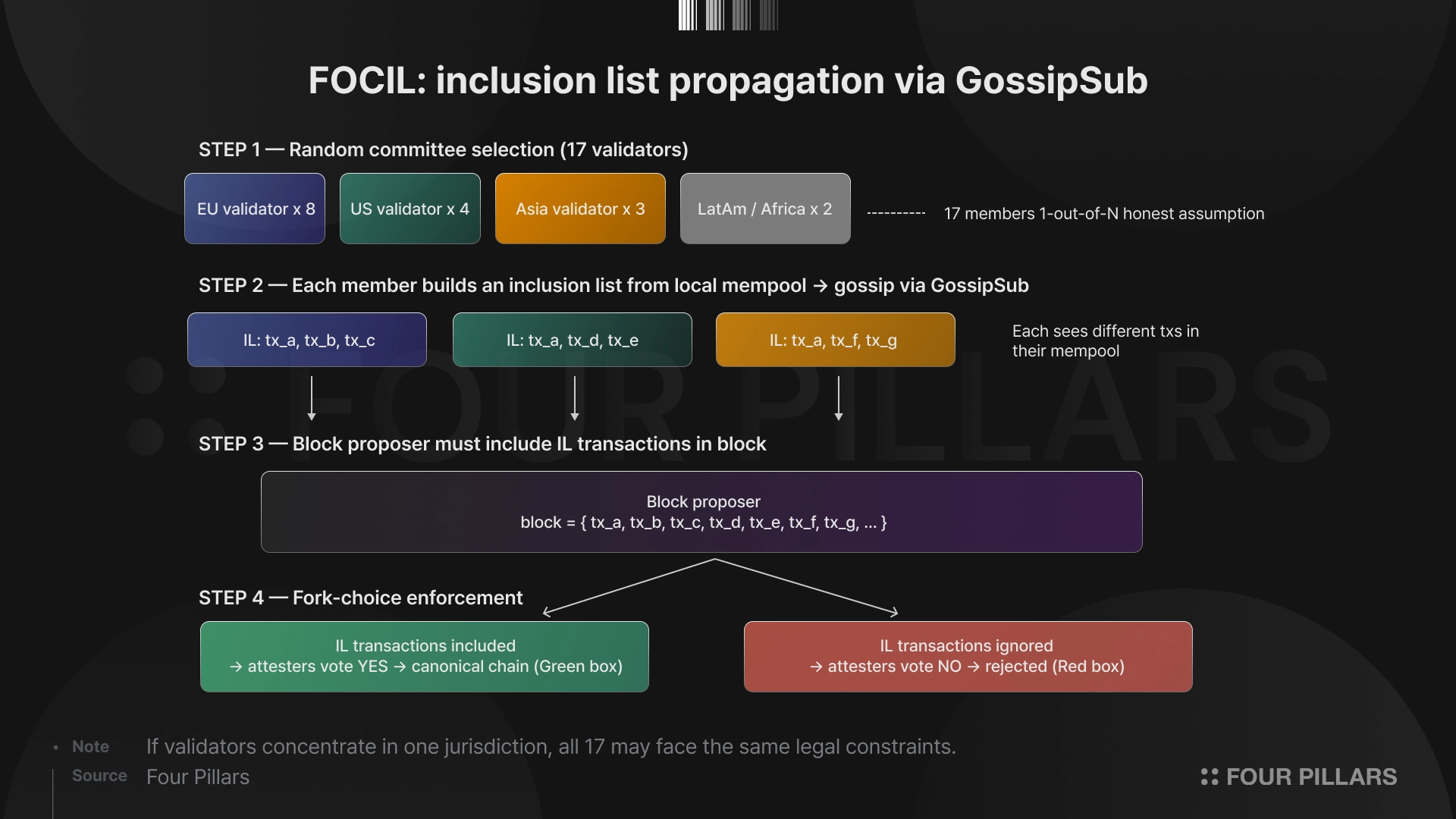

FOCIL (Fork-Choice Enforced Inclusion Lists, EIP-7805), confirmed as the headline consensus-layer feature of the Hegota hard fork in H2 2026, elevates geographic decentralization to a protocol-level concern from a censorship-resistance perspective.

FOCIL works as follows. Each slot, 17 validators are randomly selected for the inclusion list (IL) committee. Each committee member creates an IL from transactions in their mempool view and propagates it to the P2P network via GossipSub. The block proposer must include transactions from these inclusion lists in their block, and attesters refuse to vote for blocks that violate this requirement. The key point is that this mechanism is enforced by the fork-choice rule. A block that ignores ILs cannot become canonical.
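The attester-side check can be sketched in a few lines of Python. This is a simplified model under stated assumptions: `Tx`, `Block`, and `satisfies_inclusion_lists` are hypothetical names, not client or spec APIs, and the sketch follows the "conditional inclusion" reading of EIP-7805, in which an IL transaction may be left out only when the block has no room for it. Real clients also re-check transaction validity, which is omitted here. Because the check runs over the union of all lists, a single honest member's list is enough to force inclusion.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    tx_hash: str
    gas: int


@dataclass
class Block:
    gas_limit: int
    gas_used: int
    tx_hashes: set


def satisfies_inclusion_lists(block, inclusion_lists):
    """Would an attester following this simplified rule vote for `block`?

    Every transaction in the union of the committee's ILs must appear in the
    block unless the block no longer has room for it. Validity checks
    (nonce, balance, fees) are deliberately omitted.
    """
    remaining_gas = block.gas_limit - block.gas_used
    union = {tx for il in inclusion_lists for tx in il}
    for tx in union:
        if tx.tx_hash not in block.tx_hashes and tx.gas <= remaining_gas:
            return False  # left out although it would have fit
    return True


a, b = Tx("0xaa", 21_000), Tx("0xbb", 50_000)
ils = [[a], [a, b]]  # two members' lists; a real committee has more members

nearly_full = Block(gas_limit=100_000, gas_used=80_000, tx_hashes={"0xaa"})
censoring = Block(gas_limit=100_000, gas_used=40_000, tx_hashes={"0xaa"})

print(satisfies_inclusion_lists(nearly_full, ils))  # True: 0xbb does not fit
print(satisfies_inclusion_lists(censoring, ils))    # False: 0xbb fits but is missing
```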

The P2P network's geographic structure is directly relevant here. ILs must propagate via P2P before a fixed deadline within the 12-second slot, so for a committee member's inclusion list to reach the entire network quickly, that node must sit in the active core of the GossipSub mesh topology, not on the periphery. The peer score problems analyzed in Section 3 apply here as well: if non-Western nodes are pushed out of the mesh, their ILs propagate late even when they are selected for the committee, weakening FOCIL's practical effectiveness.
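The cost of sitting on the periphery can be illustrated with a shortest-path model. This Python sketch assumes every node forwards a message instantly along every link (ignoring validation time and GossipSub's lazy IHAVE/IWANT path), uses a hypothetical 100ms deadline rather than FOCIL's actual timing, and uses made-up topologies and latencies. It compares a Seoul committee member whose only path into a European/US hub runs through Tokyo and Virginia with one that also holds a direct mesh link to Frankfurt.

```python
import heapq


def undirected(edges):
    """Build an adjacency dict from (a, b, one_way_latency_ms) tuples."""
    graph = {}
    for a, b, latency in edges:
        graph.setdefault(a, []).append((b, latency))
        graph.setdefault(b, []).append((a, latency))
    return graph


def arrival_times(graph, source):
    """Earliest arrival time (ms) at every node, via Dijkstra's algorithm."""
    best = {source: 0}
    heap = [(0, source)]
    while heap:
        t, node = heapq.heappop(heap)
        if t > best[node]:
            continue  # stale heap entry
        for neighbor, latency in graph.get(node, []):
            candidate = t + latency
            if candidate < best.get(neighbor, float("inf")):
                best[neighbor] = candidate
                heapq.heappush(heap, (candidate, neighbor))
    return best


def reach_fraction(graph, source, deadline_ms):
    """Share of nodes that receive the message by `deadline_ms`."""
    times = arrival_times(graph, source)
    return sum(1 for n in graph if times.get(n, float("inf")) <= deadline_ms) / len(graph)


# Hypothetical European/US hub with one-way latencies in ms.
hub = [("frankfurt", "amsterdam", 8), ("frankfurt", "london", 12),
       ("amsterdam", "london", 6), ("london", "virginia", 40),
       ("amsterdam", "virginia", 45)]

# Periphery: Seoul reaches the hub only via Tokyo and Virginia.
periphery = undirected(hub + [("seoul", "tokyo", 15), ("tokyo", "virginia", 75)])
# In-mesh: Seoul also holds a direct mesh link to Frankfurt.
in_mesh = undirected(hub + [("seoul", "tokyo", 15), ("seoul", "frankfurt", 60)])

for name, g in (("periphery", periphery), ("in_mesh", in_mesh)):
    print(name, reach_fraction(g, "seoul", deadline_ms=100))
```

In this toy network, the peripheral node's IL reaches half of the nodes within 100ms, while the in-mesh node reaches five of six; the difference comes entirely from mesh position, not from the node's own hardware.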

Additionally, FOCIL operates on a 1-out-of-N honesty assumption. Only 1 out of 17 members needs to honestly create an IL for censorship to be prevented. However, for this "one honest includer" to exist, the 17 members must be distributed across different jurisdictions with different legal environments. If the majority of validators are in the same jurisdiction and that jurisdiction prohibits certain transactions, all 17 members may be subject to the same legal constraints. This is why FOCIL requires geographic decentralization as a prerequisite.
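The 1-out-of-N argument can be quantified. The Python sketch below assumes each of the 17 committee members independently lands in a given jurisdiction with probability equal to that jurisdiction's share of validators, which approximates random selection from a large validator set. Being outside the jurisdiction is necessary but not sufficient for an unconstrained, honest includer, so the result is an upper bound.

```python
def p_outside_includer(share_in_jurisdiction, committee_size=17):
    """Probability that at least one IL committee member is outside one jurisdiction.

    Assumes each member independently lands in that jurisdiction with
    probability `share_in_jurisdiction`. This bounds from above the chance of
    having an includer unconstrained by that jurisdiction's rules.
    """
    return 1 - share_in_jurisdiction ** committee_size


for share in (0.5, 0.9, 0.99):
    print(f"{share:.0%} in one jurisdiction -> {p_outside_includer(share):.3f}")
```

At a 90% share, about 83% of slots still draw at least one member from outside the jurisdiction; at 99%, only about 16% do. Concentration erodes the 1-out-of-N guarantee quickly once a single jurisdiction dominates.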

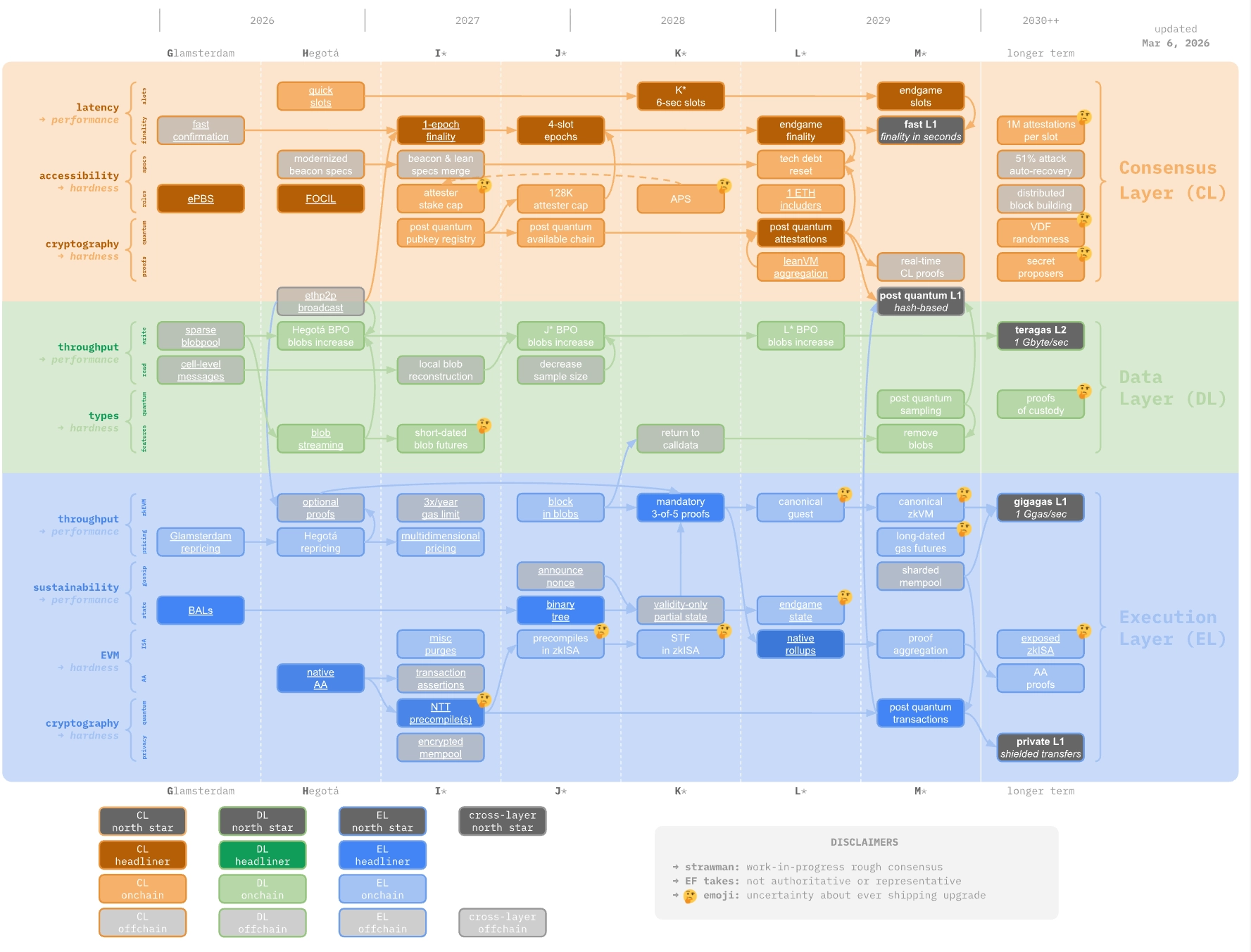

In February 2026, the EF Protocol team released Strawmap. A portmanteau of "strawman" and "roadmap," this document visualizes all Ethereum L1 upgrades across three layers: consensus (CL), data (DL), and execution (EL) on a single timeline, to be implemented through 7 hard forks at approximately 6-month intervals through 2029.

Source: strawmap.org

The five North Stars presented by Strawmap are:

- Fast L1: Slot time reduction and sub-second finality
- Gigagas L1: 1 gigagas/sec (~10,000 TPS) via zkEVM and real-time proving
- Teragas L2: 1GB/sec (~10M TPS) via data availability sampling
- Post-Quantum L1: Quantum resistance through hash-based signatures
- Private L1: Private transfers at the L1 level
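The throughput targets imply average transaction sizes, which makes a useful sanity check. The short Python calculation below divides the stated targets by the stated TPS figures; the per-transaction averages are derived here, not quoted from Strawmap, and "GB" is assumed to be decimal.

```python
# Targets as stated in the Strawmap North Stars; derived averages are not quoted.
L1_GAS_PER_SEC = 1_000_000_000    # Gigagas L1: 1 gigagas/sec
L1_TPS = 10_000                   # "~10,000 TPS"
L2_BYTES_PER_SEC = 1_000_000_000  # Teragas L2: 1GB/sec (decimal GB assumed)
L2_TPS = 10_000_000               # "~10M TPS"
ETH_TRANSFER_GAS = 21_000         # gas cost of a plain ETH transfer

gas_per_l1_tx = L1_GAS_PER_SEC / L1_TPS          # implied average gas per L1 tx
bytes_per_l2_tx = L2_BYTES_PER_SEC / L2_TPS      # implied average bytes per L2 tx
max_plain_transfers_per_sec = L1_GAS_PER_SEC // ETH_TRANSFER_GAS

print(gas_per_l1_tx, bytes_per_l2_tx, max_plain_transfers_per_sec)
```

The ~10,000 TPS figure therefore assumes transactions averaging about 100,000 gas (plain transfers alone would allow roughly 47,600 per second), and ~10M TPS on L2 assumes about 100 bytes of data per transaction.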

Examining each fork's impact on P2P networking and geographic diversity:

5.2.1 Fusaka (December 2025, completed)

PeerDAS (EIP-7594) restructured blob propagation into 128 data column subnets. Through BPO1/BPO2, blob count was expanded to a maximum of 21, making the geographic distribution of each node's custodied columns a key variable in network safety.

5.2.2 Glamsterdam (H1 2026)

ePBS, to be introduced in Glamsterdam, separates block proposers and builders at the protocol level, reducing relay dependency. This is a significant change from a P2P perspective: instead of the current structure, in which most blocks pass through a handful of relays, block propagation paths could be distributed more directly through GossipSub meshes. Additional BPOs to raise blob parameters will continue.

5.2.3 Hegota (H2 2026)

FOCIL, planned for Hegota, uses inclusion list P2P propagation as the core mechanism of censorship resistance, as analyzed in Section 5.1.

5.2.4 I* to L* Forks (2027-2029)

The biggest P2P impact from subsequent forks is the progressive reduction of slot time. Buterin proposed reducing it "gradually by a factor of the square root of 2" (12, 8, 6, 4, 3, 2 seconds). Even reducing slots from 12 to 8 seconds cuts the number of GossipSub heartbeats (currently every 0.7s) that fit in a slot, and with it the timing margin for message propagation, by roughly 33%. This could further intensify the peer score echo chamber effect analyzed in Section 3: nodes farther from network hubs will find it increasingly difficult to submit attestations on time within shorter slots, accelerating the spiral of score decline and mesh exclusion.
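The slot sequence and the ~33% figure can be checked directly. In this Python sketch, the √2 schedule is rounded to whole seconds, and the attestation deadline carried over as one third of the slot is an assumption for illustration, not a roadmap commitment.

```python
import math

HEARTBEAT_S = 0.7  # GossipSub heartbeat interval cited above


def sqrt2_schedule(start_s=12.0, floor_s=2.0):
    """Slot times obtained by repeatedly dividing by sqrt(2), stopping below floor_s."""
    times = [start_s]
    while times[-1] / math.sqrt(2) >= floor_s:
        times.append(times[-1] / math.sqrt(2))
    return times


def heartbeats_per_slot(slot_s):
    return slot_s / HEARTBEAT_S


def attestation_deadline_s(slot_s, fraction=1 / 3):
    """Deadline if today's one-third-of-slot attestation timing were kept (assumption)."""
    return slot_s * fraction


print([round(t) for t in sqrt2_schedule()])  # [12, 8, 6, 4, 3, 2]
for slot in (12, 8):
    print(slot, round(heartbeats_per_slot(slot), 1), round(attestation_deadline_s(slot), 2))
```

Moving from 12s to 8s slots drops the heartbeats per slot from about 17.1 to about 11.4, and under the one-third assumption the attestation window shrinks from 4s to about 2.67s, while the physical latency between Seoul and Frankfurt stays the same.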

At the same time, the transition to Lean Consensus (zkAttester client) and from Gasper to Minimmit (1-round BFT finality) will significantly reduce validator hardware requirements, creating an opposing force that lowers entry barriers in non-Western regions.

Strawmap is a roadmap that demonstrates the dual dynamic of "faster consensus demands more distributed infrastructure, while simultaneously lowering entry barriers." Slot reduction highlights regional latency gaps, while zkAttester reduces node operating costs to enable participation from more diverse regions. Ethereum's P2P network future will be determined by the balance between these two forces.

In January 2026, Vitalik Buterin posted the Native DVT proposal on ethresear.ch. As examined in Section 4, current DVT solutions (SSV, Obol) operate separate P2P consensus protocols within clusters, meaning inter-node latency affects every operation. Native DVT is a design that fundamentally eliminates this intra-cluster P2P overhead.

Native DVT's design is straightforward. A validator with at least n times the minimum balance specifies n keys (n at most 16) and a threshold m (m ≤ n), generating n "virtual identities." These virtual identities are always assigned to roles (block proposer, committee, subnet) together, and for protocol accounting purposes an action is treated as valid only when m or more signatures authenticate the same action. From the user's perspective, DVT staking becomes as simple as "independently running n standard client nodes."
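The accounting rule can be modeled in a few lines of Python. This is a hypothetical sketch: the proposal does not define this class or its method names, and reading "minimum balance" as 32 ETH is an assumption. Note that no intra-cluster messaging appears anywhere: each key signs independently, and the threshold is applied only when signatures are counted.

```python
from collections import Counter
from dataclasses import dataclass

MIN_BALANCE_ETH = 32  # assumption: "minimum balance" read as 32 ETH
MAX_KEYS = 16         # n is at most 16 in the proposal


@dataclass
class NativeDvtValidator:
    """Hypothetical model of a validator with n virtual identities and threshold m."""
    balance_eth: int
    pubkeys: list
    threshold: int

    def validate(self):
        n, m = len(self.pubkeys), self.threshold
        if not 1 <= n <= MAX_KEYS:
            raise ValueError("n must be between 1 and 16")
        if not 1 <= m <= n:
            raise ValueError("threshold must satisfy 1 <= m <= n")
        if self.balance_eth < n * MIN_BALANCE_ETH:
            raise ValueError("balance must be at least n times the minimum balance")

    def accepted_actions(self, signatures):
        """signatures: dict pubkey -> action that key signed (e.g. a head vote).

        Returns every action backed by at least m of this validator's keys.
        """
        counts = Counter(action for key, action in signatures.items()
                         if key in self.pubkeys)
        return [action for action, c in counts.items() if c >= self.threshold]


keys = [f"key{i}" for i in range(7)]
validator = NativeDvtValidator(balance_eth=7 * 32, pubkeys=keys, threshold=5)
validator.validate()

# Six nodes sign the same head independently; one is offline.
votes = {k: "head_A" for k in keys[:6]}
print(validator.accepted_actions(votes))  # ['head_A']
```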

This Native DVT proposal impacts geographic diversity in P2P networks in two key ways.

5.3.1 Resolving Intra-Cluster P2P Latency Issues

In current DVT architecture, the 120ms round-trip latency between Seoul and Frankfurt is directly reflected in intra-cluster consensus. This makes geographically distributed DVT operation difficult. In Native DVT, each node signs independently and the protocol verifies thresholds after the fact, meaning attestations require no intra-cluster communication and add zero additional latency. Block proposals also require only a single leader round, dramatically reducing the practical performance penalty for geographically distributed DVT clusters.

5.3.2 Validator Distribution Through Lower Entry Barriers

Buterin himself said he wrote this proposal after personally experiencing the complexity of a Vouch/Dirk/Vero setup, underscoring that current DVT's setup burden is a major reason operators give up self-staking and delegate to staking service providers. If Native DVT is implemented, large stakers (institutions, whales) will have a greater incentive to move to self-staking, which could directly improve the geographic distribution of validators.

That said, Native DVT does not completely solve the geographic distribution problem. The more geographically distributed the nodes, the higher the probability that some nodes see the proposer's block late and attest to a different head, causing "head vote disagreement." In an m=5, n=7 configuration, if 3 nodes see the block late and vote for a missed slot, neither side reaches the threshold and rewards drop to zero. There are also concerns that virtual identities could increase the resources needed for consensus.
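How often the head-vote split described above leaves no side at threshold can be estimated with a binomial model. This Python sketch assumes each node independently sees the block late with the same probability and then votes for the missed slot; real lateness is correlated among nodes in the same region, which this independence assumption ignores.

```python
from math import comb


def p_no_threshold(n, m, p_late):
    """Probability that neither the block nor the missed slot gets m of n head votes.

    Assumes each of the n nodes independently sees the block late with
    probability p_late (and then votes for the missed slot); otherwise it
    votes for the block.
    """
    total = 0.0
    for late in range(n + 1):
        on_time = n - late
        if on_time < m and late < m:
            total += comb(n, late) * p_late ** late * (1 - p_late) ** on_time
    return total


for p in (0.05, 0.1, 0.2):
    print(p, round(p_no_threshold(7, 5, p), 4))
```

With m=5 and n=7, only splits of 3-4 or 4-3 fail. A 10% per-node chance of seeing the block late already leaves about 2.6% of head votes without a threshold; at 20% it is about 14%.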

Nevertheless, Native DVT could reduce, at the protocol level, the core problem current DVT faces: "the P2P cost of geographically distributed DVT operation."

As discussed in Section 2, PeerDAS (EIP-7594), activated in Fusaka (December 2025), fundamentally changed the P2P propagation structure for blob data. By transitioning from 6 blob_sidecar subnets to 128 data column subnets and introducing a structure where each node custodies only a few columns, it reduced individual node bandwidth burden while safely expanding blob count to the current target of 14 and maximum of 21.
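The bandwidth saving can be estimated from blob and column sizes. This Python sketch assumes a 128 KiB blob (4096 field elements of 32 bytes), a 2x erasure extension split into 128 cells, and a regular node sampling 8 columns per slot. These match my reading of the Fulu parameters but should be checked against the spec, and the figures exclude KZG proofs and gossip duplication.

```python
BYTES_PER_BLOB = 4096 * 32   # 4096 field elements x 32 bytes = 128 KiB
NUMBER_OF_COLUMNS = 128
# Each blob is erasure-extended to twice its size and cut into one cell per column.
CELL_BYTES = 2 * BYTES_PER_BLOB // NUMBER_OF_COLUMNS
SAMPLED_COLUMNS = 8          # assumption: columns a regular node samples per slot


def blob_download_kib(blobs, columns):
    """Blob-data download per slot in KiB (ignores proofs and gossip duplication)."""
    return blobs * columns * CELL_BYTES / 1024


max_blobs = 21
whole_blobs = max_blobs * BYTES_PER_BLOB / 1024   # fetching every blob in full
sampling_node = blob_download_kib(max_blobs, SAMPLED_COLUMNS)
full_custody = blob_download_kib(max_blobs, NUMBER_OF_COLUMNS)

print(CELL_BYTES, whole_blobs, sampling_node, full_custody)
```

At 21 blobs, a sampling node pulls about 336 KiB of blob data per slot, versus 2,688 KiB if it had to fetch every blob in full. A node custodying all 128 columns handles the extended data, about 5,376 KiB.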

PeerDAS is the first step toward Full DAS (full Danksharding). While PeerDAS applies 1D erasure coding to each blob individually, Full DAS applies 2D erasure coding across the entire blob matrix by both rows and columns, providing much stronger data reconstruction resilience and more efficient verification. Buterin stated that "PeerDAS is the first working implementation of data sharding" and that "over the next two years, we will refine the PeerDAS mechanism and carefully scale it, and when ZK-EVMs are mature, turn it inward to scale Ethereum L1 gas as well." Strawmap's Teragas L2 North Star (1GB/sec, ~10M TPS) cannot be reached through PeerDAS's approximately 8x scaling alone, making evolution to Full DAS essential.

In this evolution, geographic distribution becomes even more critical. In PeerDAS, the geographic distribution of the 128 columns is already key; Full DAS samples across both the rows and columns of a 2D matrix, so nodes in diverse regions must hold different cells for sampling to be reliable. As blob counts expand through BPO3 and BPO4 toward 128 and the network eventually transitions to Full DAS, concentrating nodes in a few areas makes it far more likely than today that a single regional outage renders many cells inaccessible at once. PeerDAS and its successor Full DAS, like FOCIL, are designs that structurally presuppose the geographic distribution of nodes.
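A toy model shows how geography alone decides whether data survives a regional outage. This Python sketch uses 32 hypothetical nodes custodying 4 columns each, with no redundancy; real custody is derived from node IDs, and mainnet has thousands of nodes with overlapping custody. It models PeerDAS's 1D columns, where any half of the 128 columns suffices to reconstruct, rather than Full DAS's 2D cells, but the failure pattern is the same.

```python
NUMBER_OF_COLUMNS = 128
RECONSTRUCTION_THRESHOLD = NUMBER_OF_COLUMNS // 2  # any half of the columns suffices in 1D PeerDAS


def stripe(region, first_col, n_nodes, cols_per_node=4):
    """Hypothetical helper: n_nodes nodes in `region`, each custodying consecutive columns."""
    return [(region, set(range(first_col + i * cols_per_node,
                               first_col + (i + 1) * cols_per_node)))
            for i in range(n_nodes)]


def survives_outage(custody, failed_region):
    """Return (can_reconstruct, distinct_columns_left) after failed_region goes dark."""
    alive = set()
    for region, cols in custody:
        if region != failed_region:
            alive |= cols
    return len(alive) >= RECONSTRUCTION_THRESHOLD, len(alive)


# 32 nodes with 4 columns each in both layouts; only the geography differs.
concentrated = stripe("europe", 0, 24) + stripe("north_america", 96, 4) + stripe("asia", 112, 4)
balanced = (stripe("europe", 0, 8) + stripe("north_america", 32, 8)
            + stripe("asia", 64, 8) + stripe("south_america", 96, 8))

print(survives_outage(concentrated, "europe"))  # (False, 32)
print(survives_outage(balanced, "europe"))      # (True, 96)
```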

The introduction of ePBS in H1 2026 will change the current MEV structure. Enshrining the relay's intermediary role in the protocol reduces the need to trust relay operators. However, ePBS alone does not solve block builder concentration (the top 3 builders construct over 90% of blocks), and this is the gap FOCIL aims to fill: its inclusion lists force the inclusion of transactions that builders attempt to exclude.

Starting with FOCIL's activation in H2 2026, "where you operate your validator" becomes a variable that directly affects the protocol's security. The moment a non-Western validator selected for the IL committee includes a transaction censored by Western-based relays in their IL, FOCIL's censorship resistance mechanism comes to life.

If the Native DVT proposal is incorporated into the protocol, the entry barrier for DVT staking will drop dramatically, potentially accelerating large stakers' transition to self-staking. This would become a structural force for distributing the validator concentration currently held by a small number of staking providers.

Regarding the roadmap through 2029 presented by Strawmap, the following points directly impact geographic decentralization.

6.2.1 P2P Impact of Slot Time Reduction

The progressive reduction proposed by Strawmap (12s, 8s, 6s, 4s) compresses the propagation timing margin in GossipSub meshes. As slots shorten, the impact of latency on peer scoring increases, and without sufficient node density on each continent, mesh exclusion problems for non-Western nodes could intensify.

6.2.2 After FOCIL Activation

When FOCIL activates in Hegota (H2 2026), the key question will be how IL P2P propagation performs on mainnet. IL size (currently 8KB), compatibility with EIP-8141, and other specifications have been finalized, while incentive and penalty mechanisms for IL committee members remain an open research question. Whether this design process reflects the perspectives of validators across diverse regions will matter. In particular, we should monitor whether the GossipSub mesh exclusion issues discussed earlier also affect FOCIL's effectiveness.

6.2.3 Post-Pectra Validator Consolidation (2,048 MaxEB)

The Pectra upgrade in May 2025 enabled consolidation of up to 2,048 ETH per validator. Whether this deepens centralization among large staking operators or instead improves efficiency for smaller operators and promotes distribution needs to be tracked with actual data. Reducing validator count could lower participant density per attestation subnet, affecting backbone stability. At the same time, an increase in supernodes with 4,096 ETH or more could enhance data column custody stability.
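Both effects in this paragraph can be quantified. The committee function below mirrors the consensus spec's `get_committee_count_per_slot`. The custody function reflects my reading of Fulu's validator custody rule (one additional custody group per 32 ETH of attached balance, from a floor of 8 up to all 128 groups), which would explain why 4,096 ETH corresponds to full custody; treat those constants as assumptions to verify against the spec. The network-wide validator counts are hypothetical.

```python
SLOTS_PER_EPOCH = 32
MAX_COMMITTEES_PER_SLOT = 64
TARGET_COMMITTEE_SIZE = 128


def committees_per_slot(active_validators):
    """Same formula as the consensus spec's get_committee_count_per_slot."""
    return max(1, min(MAX_COMMITTEES_PER_SLOT,
                      active_validators // SLOTS_PER_EPOCH // TARGET_COMMITTEE_SIZE))


def avg_committee_size(active_validators):
    per_slot = active_validators // SLOTS_PER_EPOCH
    return per_slot / committees_per_slot(active_validators)


# Validator custody as I read the Fulu parameters (assumption; verify against the spec).
VALIDATOR_CUSTODY_REQUIREMENT = 8
BALANCE_PER_ADDITIONAL_CUSTODY_GROUP_ETH = 32
NUMBER_OF_CUSTODY_GROUPS = 128


def validator_custody_groups(total_node_balance_eth):
    count = total_node_balance_eth // BALANCE_PER_ADDITIONAL_CUSTODY_GROUP_ETH
    return min(max(count, VALIDATOR_CUSTODY_REQUIREMENT), NUMBER_OF_CUSTODY_GROUPS)


stake_eth = 800_000
print(stake_eth // 32, -(-stake_eth // 2048))  # 25000 validators vs 391 after full consolidation
for n in (1_000_000, 200_000):                 # hypothetical network-wide validator counts
    print(n, committees_per_slot(n), round(avg_committee_size(n), 1))
print(validator_custody_groups(2048), validator_custody_groups(4096))  # 64 128
```

Consolidating 800K ETH from 32 ETH to 2,048 ETH validators shrinks 25,000 validators to 391. Network-wide, once active validators fall below 64 × 32 × 128 = 262,144, the spec starts forming fewer than 64 committees per slot, which is one concrete way consolidation could thin out attestation subnets.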

6.2.4 The ZK Transition Roadmap

Strawmap's Lean Consensus aims to transition validators from re-executing transactions to verifying ZK proofs via zkAttester clients. If realized, the computing resources required for validator operation would decrease significantly, lowering entry barriers in non-Western regions where high-spec hardware is harder to obtain.

6.2.5 Regulatory and Environmental Changes

South Korea's virtual asset regulations are focused on exchanges and investor protection, with the legal status of validators/node operators still unclear. How regulatory frameworks in key jurisdictions like Singapore, Japan, Hong Kong, Brazil, and the UAE evolve regarding staking infrastructure will determine the growth pace of validator ecosystems in each region.

The shift toward institutional staking in Ethereum also warrants attention. We should watch whether large-scale staking by DAT (Digital Asset Treasury) companies, represented by Bitmine, and the proliferation of Ethereum staking ETFs further deepen geographic concentration of validators. Bitmine's staked ETH exceeds 3 million as of March 2026, and its validator infrastructure company MAVAN, an acronym for Made-in-America Validator Network, broadcasts its American identity right in its name.

Ethereum's decentralization is not a state that is achieved once and done, but an action that requires continuous effort from participants. Even if FOCIL enforces censorship resistance at the protocol level, it is only a half-measure if the physical infrastructure executing that protocol is concentrated in one place.

Ethereum's P2P network, composed of devp2p and libp2p, is fundamentally a system that grows stronger with more and more diverse participants. GossipSub's mesh topology, data column subnets, and peer scoring system are all designed to perform optimally with geographic distribution as a prerequisite. The roadmap Strawmap envisions through 2029 only strengthens this requirement.

Each network participant should act toward the vision they seek for Ethereum, rather than merely stating what it should look like.

The reason geographic distribution of Ethereum nodes has not been highlighted as a major issue in the Ethereum community so far is likely that the community's center of gravity, like the geographic concentration of nodes, lies in Europe and North America. However, if Ethereum is to truly live up to its "world computer" vision, it must be possible to participate in the network on equal terms from anywhere in the world. An attestation submitted from Seoul should require the same effort as one from New York. An inclusion list from an Iranian validator selected for the FOCIL committee should propagate reliably. A node operated from Africa should contribute seamlessly to the custody of 128 data columns. That is the destination Ethereum, as a Peer-to-Peer network, must reach.
